What AI ethics covers

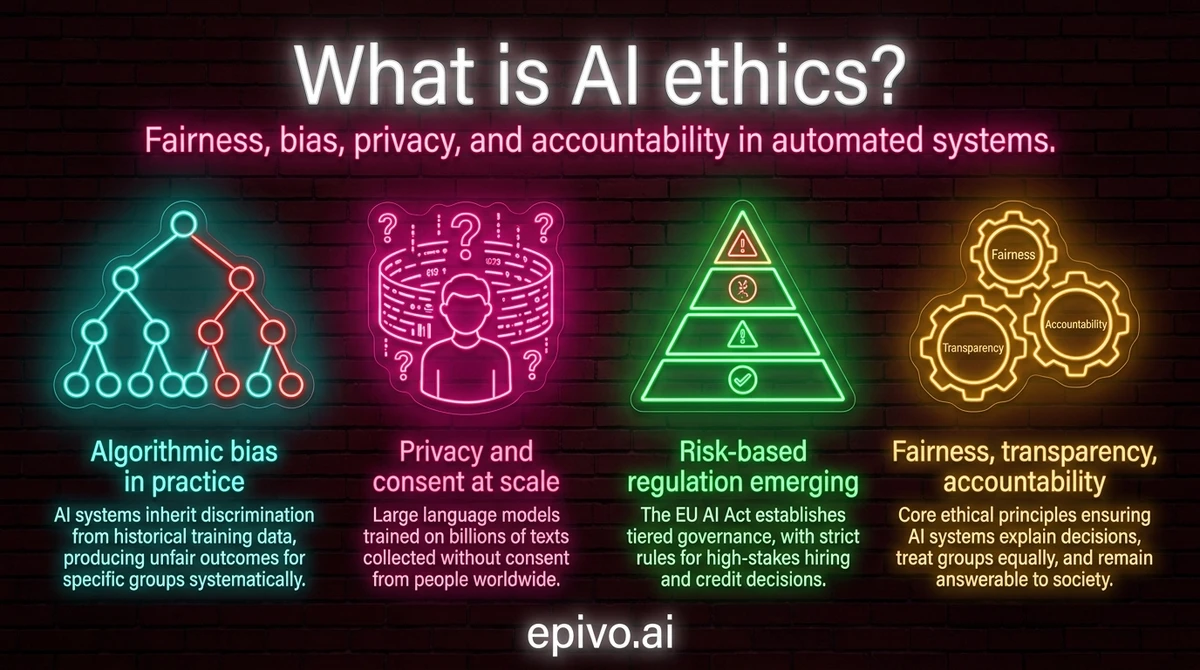

AI ethics is not a single rule or regulation — it is a cluster of interconnected concerns that arise whenever an automated system makes or informs a decision that affects people.

Fairness asks whether an AI system treats individuals and groups equitably. A hiring algorithm that screens out women at a higher rate than men fails the fairness standard even if no one intended it to discriminate.

Accountability asks who is responsible when an AI system causes harm. If a bank's credit-scoring model rejects a qualified applicant based on a flawed signal, it matters greatly whether the bank, the model's vendor, or the regulator is responsible for the outcome.

Transparency asks whether the reasoning behind a decision can be understood and explained. Many modern AI systems, particularly deep learning models, produce outputs without any interpretable explanation — a property sometimes called the black-box problem. When a patient is denied coverage or a job offer is withdrawn, opacity makes redress almost impossible.

Privacy concerns how personal data is collected, stored, and used in training and operating AI systems.

Safety covers the risk that an AI system behaves in unintended or dangerous ways, from a self-driving vehicle misclassifying a pedestrian to a medical-diagnosis tool recommending the wrong treatment.

These five concerns — fairness, accountability, transparency, privacy, and safety — form the working vocabulary of AI ethics. Organisations such as the AI Now Institute have documented how failures in each area produce real-world harm, particularly for communities already facing systemic disadvantage.

Algorithmic bias: how discrimination is encoded

Algorithmic bias occurs when an AI system produces systematically unfair outcomes for particular groups. It usually originates in training data — because historical records often encode past discrimination, a model trained on that data will reproduce and sometimes amplify it.

The consequences can be serious. In 2019, a study published in Science revealed that a healthcare algorithm widely used across US hospitals assigned lower risk scores to Black patients relative to their true health needs, effectively reducing their access to care. The algorithm had been trained on historical spending data, which reflected longstanding disparities in treatment, not the actual severity of illness.
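The proxy problem can be illustrated with a minimal Python sketch. The patient records, the field names, and the use of a chronic-condition count as a stand-in for health need are all hypothetical; none of this comes from the study's data or from the algorithm itself. The sketch only shows how ranking by past spending can push an equally sick patient out of the top slots when their group has historically received less care.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    name: str
    group: str
    chronic_conditions: int  # hypothetical stand-in for true health need
    past_spending: float     # hypothetical stand-in for the cost-based proxy


# Invented records: p1 and p2 are equally sick, but p2's group
# historically received (and therefore was billed for) less care.
patients = [
    Patient("p1", "A", 4, 12000.0),
    Patient("p2", "B", 4, 4500.0),
    Patient("p3", "A", 2, 5000.0),
    Patient("p4", "B", 2, 3000.0),
]


def top_k(records, key, k):
    """Return the names of the k records ranked highest by `key`."""
    ranked = sorted(records, key=key, reverse=True)
    return [p.name for p in ranked[:k]]


# Two slots in a hypothetical extra-care programme.
by_cost = top_k(patients, key=lambda p: p.past_spending, k=2)
by_need = top_k(patients, key=lambda p: p.chronic_conditions, k=2)

print("Selected when ranking by past spending:", by_cost)
print("Selected when ranking by health need:  ", by_need)
```

In this toy data, ranking by spending selects p1 and p3, while ranking by need selects p1 and p2. The sicker patient from group B loses a place to a healthier patient from group A.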

Hiring tools have shown similar patterns. Amazon famously scrapped an AI-powered recruitment tool after discovering that it consistently downgraded resumes from women. The system had learned from ten years of predominantly male hiring decisions and treated male-coded language as a signal of quality.

Facial recognition is another documented problem area. Independent audits, including the Gender Shades research from MIT Media Lab, found that commercial facial-analysis systems used to classify gender had error rates up to 34 percentage points higher for darker-skinned women than for lighter-skinned men, a gap that high overall accuracy figures concealed.
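A short Python sketch shows why a single headline accuracy figure can hide this kind of gap. The group labels, sample sizes, and error counts are invented for illustration and do not reproduce the audit's data. The helper `error_rates` is a hypothetical name.

```python
from collections import defaultdict


def error_rates(records):
    """Compute overall and per-subgroup error rates.

    records: iterable of (subgroup, predicted_label, actual_label).
    Returns (overall_error_rate, {subgroup: error_rate}).
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for subgroup, predicted, actual in records:
        totals[subgroup] += 1
        if predicted != actual:
            errors[subgroup] += 1
    overall = sum(errors.values()) / sum(totals.values())
    per_group = {g: errors[g] / totals[g] for g in totals}
    return overall, per_group


# Invented test set: one subgroup dominates the sample.
records = (
    [("lighter_male", "male", "male")] * 89
    + [("lighter_male", "female", "male")] * 1
    + [("darker_female", "female", "female")] * 7
    + [("darker_female", "male", "female")] * 3
)

overall, per_group = error_rates(records)
print(f"overall error rate: {overall:.1%}")
for group, rate in per_group.items():
    print(f"{group}: {rate:.1%}")
```

With these made-up numbers the overall error rate is 4%, yet the smaller subgroup sees 30% errors. Reporting results per subgroup, as the audits did, is what makes the disparity visible.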

Bias auditing has emerged as a response: a systematic process of testing AI systems for disparate outcomes across demographic groups before and after deployment. Some jurisdictions now require it. The NIST AI Risk Management Framework provides guidance on how organisations can structure bias evaluation as part of a broader risk management process. Crucially, auditing treats bias detection as an ongoing operational requirement rather than a one-time pre-launch check.
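One simple check an audit might include is a comparison of selection rates across groups, sketched below in Python. This is an illustration under stated assumptions, not a method prescribed by the NIST framework or by any specific law. The outcome counts are invented, the function names are hypothetical, and the 0.8 threshold borrows the "four-fifths" rule of thumb from US employment guidance purely as an example cut-off.

```python
def selection_rates(outcomes):
    """outcomes: dict mapping group -> (number_selected, number_of_applicants)."""
    return {group: sel / total for group, (sel, total) in outcomes.items()}


def audit(outcomes, threshold=0.8):
    """Flag groups whose selection rate falls below `threshold` times
    the highest group's rate. The threshold is an illustrative assumption."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        # If nobody was selected at all, there is no disparity to measure.
        ratio = rate / best if best > 0 else 1.0
        report[group] = {
            "rate": rate,
            "ratio": ratio,
            "flagged": ratio < threshold,
        }
    return report


# Invented screening results for one audit period.
outcomes = {"men": (60, 200), "women": (30, 200)}
report = audit(outcomes)

for group, row in report.items():
    status = "FLAG" if row["flagged"] else "ok"
    print(f"{group}: rate={row['rate']:.2f} ratio={row['ratio']:.2f} {status}")
```

Here men are selected at a rate of 0.30 and women at 0.15, a ratio of 0.5, so the check flags the result. Running the same function on each period's outcomes after launch matches the point above that auditing is an ongoing requirement rather than a one-time check.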

Privacy, surveillance, and intellectual property

AI systems at scale consume enormous quantities of data, and much of that data concerns people who never consented to its collection or to its use in AI systems.

Large language models are trained on text scraped from the public internet, including personal blogs, forum posts, professional profiles, and journalistic archives. Image-generation systems are trained on hundreds of millions of images, many taken from photographers and illustrators without permission or compensation. Legal challenges from publishers, artists, and journalists are making their way through courts in multiple jurisdictions, raising unresolved questions about what copyright law permits when works are used as training data at this scale.

Facial recognition compounds the privacy concern. Clearview AI built a database of more than 30 billion scraped images to power a facial recognition service sold to law enforcement, without obtaining consent from the individuals pictured. Several regulators in Europe and Canada found this violated data protection law. The episode illustrated how AI can transform publicly visible information into a surveillance instrument without any deliberate act by the people being tracked.

Deepfakes, AI-generated synthetic media that realistically depict real people saying or doing things they never said or did, raise a related set of harms. They have been used to generate non-consensual intimate imagery, to spread misinformation, and to manipulate public perception during elections. Detection tools exist but consistently lag behind generation capabilities.

For organisations, digital privacy obligations intersect directly with AI: if a system processes personal data as part of its operation, the full requirements of GDPR or equivalent legislation apply, including data minimisation, purpose limitation, and safeguards around automated decisions that produce significant effects, often described as a right to explanation.

Did you know?

- A 2019 study in Science found that a widely used US healthcare algorithm assigned Black patients lower risk scores than equally sick white patients, reducing their access to extra care. Algorithms of this type are applied to roughly 200 million people in the US each year.
  Source: Obermeyer et al. (2019), Dissecting racial bias in an algorithm used to manage the health of populations, Science

- MIT Media Lab researchers found that commercial facial-analysis systems misclassified the gender of darker-skinned women up to 34.7% of the time, compared with an error rate of 0.8% for lighter-skinned men.
  Source: Buolamwini & Gebru (2018), Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification, MIT Media Lab

- The EU AI Act, which entered into force in August 2024, classifies AI systems used in hiring, credit scoring, and law enforcement as high-risk and requires conformity assessments before deployment.
  Source: EU AI Act, Official Text and Implementation Timeline

AI regulation and what responsible use looks like in practice

The ethical questions raised by AI have moved firmly into the regulatory arena. The EU AI Act is the most comprehensive framework to date. It establishes a risk-tiered approach: AI applications considered to pose unacceptable risk, such as social scoring and, subject to narrow exceptions, real-time remote biometric identification in public spaces by law enforcement, are prohibited outright. High-risk applications, including those affecting employment decisions, credit access, and criminal justice, must meet stringent requirements for transparency, human oversight, and technical documentation before they can be deployed in European markets. Lower-risk systems face lighter-touch obligations, primarily around disclosure.

In the United States, an executive order on AI issued in 2023 directed federal agencies to develop sector-specific guidance and required developers of the most powerful AI systems to share safety test results with government before public release. That order was rescinded in January 2025. The US approach remains more fragmented than the EU model, with different standards emerging across healthcare, financial services, and defence.

For organisations, responsible AI use is increasingly a matter of operational risk management as much as ethics. A biased hiring tool can generate employment tribunal liability. A data-hungry customer service AI can produce GDPR violations. An opaque credit algorithm can violate the Equal Credit Opportunity Act. The Partnership on AI has published practical frameworks for governance that translate principles into process: appointing accountability owners, establishing model documentation standards, running bias audits before deployment, and creating clear channels for affected individuals to raise concerns.

For individuals, responsible use starts with knowing what AI systems are operating on your behalf or making decisions about you — and exercising the rights to access, explanation, and contestation that data protection law in many jurisdictions already provides.

AI ethics is not a destination. As capabilities advance and applications multiply, the questions evolve. What makes the field practically valuable is not a fixed answer to every dilemma, but the habit of asking — before deployment, not after — who is affected, what could go wrong, and who will be accountable when it does.

Frequently asked questions

- What is AI ethics in simple terms?

- AI ethics is the study of how AI systems should be designed, used, and governed to avoid causing harm. It covers questions of fairness, transparency, accountability, privacy, and safety — asking not just whether an AI system works, but whether it is right to use it in a given context.

- What are examples of AI bias?

- Documented examples include a healthcare algorithm that assigned lower risk scores to Black patients than to equally sick white patients, a hiring tool trained on historical data that downgraded resumes from women, and facial recognition systems with significantly higher error rates for darker-skinned individuals. Bias typically originates in unrepresentative or historically skewed training data.

- Who is responsible for ethical AI?

- Responsibility is shared. Developers must design systems with fairness and transparency in mind. Organisations deploying AI are responsible for how it affects their customers and employees. Regulators set minimum standards and enforce them. The trend in law and governance is toward holding deploying organisations — not only vendors — accountable for outcomes.

- What is the EU AI Act?

- The EU AI Act, in force since August 2024, is the world's first comprehensive AI regulation. It classifies AI applications by risk level — prohibited, high-risk, and lower-risk — and sets requirements for transparency, human oversight, and safety testing. High-risk applications include those affecting hiring, credit, and law enforcement.

- How can organisations use AI responsibly?

- Responsible use involves: identifying which AI systems affect people, conducting bias audits before deployment, maintaining clear documentation of model decisions, ensuring human oversight for high-stakes outcomes, establishing a process for affected individuals to raise concerns, and assigning clear accountability owners for each AI system in use.

- What is an AI ethics policy?

- An AI ethics policy is an internal document that defines an organisation's principles and procedures for AI use — covering how AI can and cannot be used, who is accountable, how bias and errors will be identified, and how the organisation will respond when an AI system causes harm. Leading frameworks from the Partnership on AI provide templates.