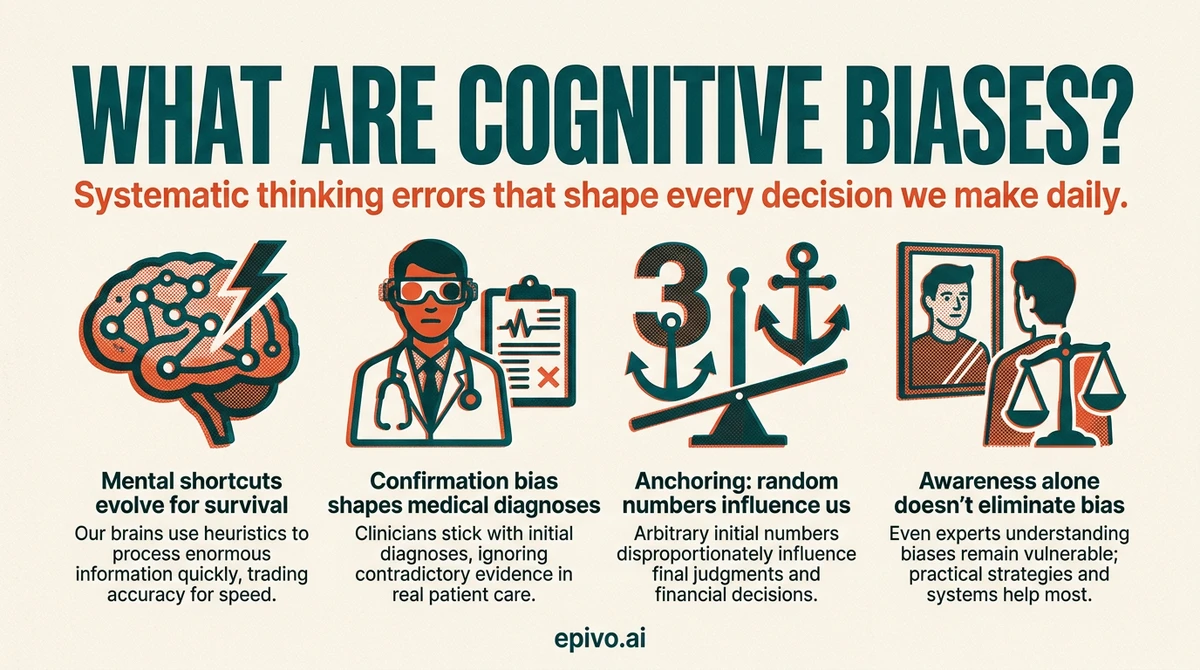

Why our brains take shortcuts

The human brain processes an enormous amount of information every second. To cope, it relies on heuristics — quick mental rules of thumb that allow us to reach judgments without exhaustively analysing every option. Most of the time, these shortcuts work well enough. But they also introduce predictable, systematic errors known as cognitive biases.

The psychologists Daniel Kahneman and Amos Tversky spent decades mapping these errors. Kahneman's landmark book Thinking, Fast and Slow (2011) summarises much of this research and describes two modes of thought. System 1 is fast, automatic, and largely unconscious; it handles routine judgments instantly. System 2 is slow, deliberate, and effortful; it engages when problems require careful analysis.

Cognitive biases arise mainly in System 1. When we judge something quickly, we are drawing on experience, emotion, and pattern recognition rather than systematic reasoning. For everyday tasks this is efficient; for complex decisions it can lead us badly astray.

Evolutionarily, many biases made sense. Overestimating threats kept our ancestors alive. Trusting familiar people and ideas reduced social risk. But in a modern world of global information, financial markets, and complex institutions, these same shortcuts can distort our understanding of reality. Practising critical thinking helps us notice when System 1 is leading us in the wrong direction.

The most common cognitive biases explained

Researchers have catalogued more than 180 cognitive biases, though many overlap. Some are obscure; others shape nearly every decision we make. Understanding the major ones builds a foundation for better judgment.

Confirmation bias

Confirmation bias is the tendency to seek out, favour, and remember information that confirms what we already believe, while discounting evidence that challenges it. Psychologist Raymond Nickerson's comprehensive 1998 review found this bias appears across scientific reasoning, legal judgment, and everyday conversation. It helps explain why people with opposing political views can read the same article and both feel vindicated. Recognising confirmation bias requires deliberately seeking out strong counterarguments, not just reassuring ones.

Anchoring bias

Anchoring occurs when the first piece of information we encounter (the 'anchor') disproportionately influences later judgments. In a classic experiment reported by Tversky and Kahneman in 1974, participants watched a wheel of fortune stop at either 10 or 65. They were then asked to estimate the percentage of African countries in the United Nations. Those who saw 65 gave a median estimate of 45%; those who saw 10 gave a median estimate of 25%. The number on the wheel was entirely arbitrary, yet it shaped their estimates. In everyday life, anchoring affects salary negotiations, retail pricing, and medical diagnosis.

The availability heuristic

We judge how likely something is by how easily an example comes to mind. After seeing news coverage of plane crashes, people overestimate the danger of flying and underestimate the far greater danger of driving. This heuristic makes vivid, memorable events feel more probable than quiet, statistically common ones.

The Dunning-Kruger effect

In a 1999 study, psychologists Justin Kruger and David Dunning found that people with limited knowledge or skill in an area tend to overestimate their competence. Part of the reason is that the skills needed to recognise good performance are largely the same skills needed to produce it. The effect has a mirror image: the highest performers in their study tended to underestimate how they ranked relative to others. This dynamic shapes education, workplace culture, and public debate about complex topics.

Framing effect

How a choice is presented changes how we evaluate it. 'This surgery has a 90% survival rate' and 'this surgery has a 10% mortality rate' describe identical situations, yet people tend to find the surgery more attractive when it is described in terms of survival. Part of the explanation lies in loss aversion. Kahneman and Tversky showed that people are generally more strongly motivated to avoid losses than to achieve equivalent gains, so an outcome framed as a loss weighs more heavily than the same outcome framed as a gain.

Understanding these biases connects directly to the traditions of philosophy, which has long examined how we form beliefs and how we can reason more reliably.

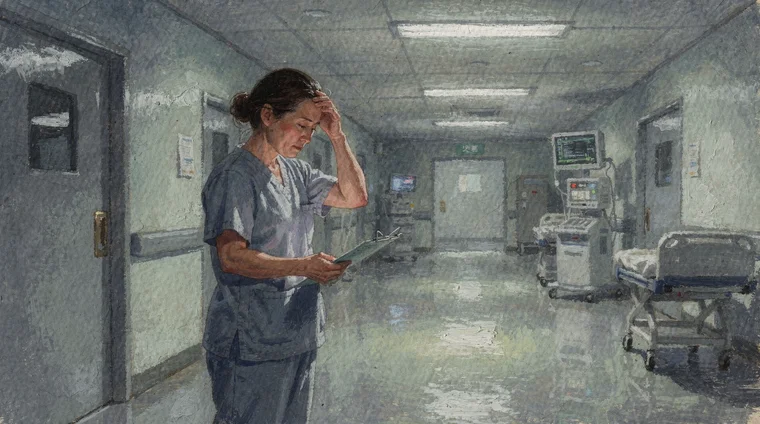

How cognitive biases affect real-world decisions

Cognitive biases are not merely academic curiosities. They shape consequential decisions in medicine, finance, law, and public policy.

In medicine, confirmation bias can lead clinicians to stick with an initial diagnosis even as contradicting evidence accumulates. Anchoring on a patient's first presented symptom can cause doctors to miss a different underlying condition. Some studies in emergency medicine suggest that debiasing techniques, such as explicitly prompting clinicians to consider alternative diagnoses, can reduce diagnostic error.

In financial markets, the availability heuristic can drive investors to chase recent winners and flee recent losers, often buying high and selling low. Anchoring helps explain why people hold onto falling stocks: they stay fixed on the price they originally paid rather than on the stock's current value. Behavioural economist Richard Thaler built on Kahneman and Tversky's research. He received the 2017 Nobel Memorial Prize in Economic Sciences for his contributions to behavioural economics, including research on how predictable departures from rationality shape economic decisions.

In the courtroom, eyewitness testimony is highly susceptible to bias. Memory is reconstructive, not reproductive. Leading questions and information encountered after the event can reshape it, and a witness can grow more confident in a memory without it becoming any more accurate. Anchoring has also been documented in sentencing. In one experiment, judges who rolled higher numbers on loaded dice recommended longer sentences for the same offence.

In everyday life, biases influence which news we share, how we evaluate job candidates, and how we respond to health information. Awareness alone reduces their grip somewhat — but the most effective mitigation strategies involve structural changes: checklists, diverse teams, pre-mortems, and deliberate consideration of alternatives.

How to recognise and reduce cognitive biases

Knowing that cognitive biases exist is not sufficient to eliminate them — research shows that even experts who understand biases thoroughly remain vulnerable to them. But awareness, combined with practical strategies, meaningfully improves decision quality.

Slow down deliberately. Many biases arise from fast, automatic System 1 thinking. When a decision is important, pause and engage System 2. Ask: what evidence supports this conclusion, and what evidence contradicts it? What would I think if the situation were framed differently?

Seek disconfirming evidence. Actively search for information that challenges your current view. This is the direct antidote to confirmation bias. Techniques like 'steelmanning' — constructing the strongest possible version of an opposing argument — make this concrete.

Use reference classes. For predictions about the future, look at the base rate of outcomes among similar past cases rather than relying only on the specifics of your current case. This helps counter the planning fallacy, the common tendency to underestimate how long projects will take.

Build in structured reflection. Pre-mortems — imagining that your decision has already failed and working backwards to identify why — help surface assumptions and risks that optimism bias would otherwise suppress.

Diversify your information sources. Recommendation algorithms on social media can reinforce the availability heuristic and confirmation bias by feeding us more of what we already engage with. Deliberately reading sources that challenge your assumptions broadens your information diet.

Work with others. Group deliberation, when structured well, catches individual biases that solo thinking misses. The key is ensuring that diverse perspectives are genuinely heard, not filtered through the dominance of the loudest voice.

None of these strategies eliminates bias entirely. But building habits of careful, critical thinking is the most reliable path to more accurate judgment over time.

Did you know?

- In Tversky and Kahneman's 1974 anchoring experiment, an arbitrary number shifted participants' median estimates of African UN membership by 20 percentage points (25% versus 45%).

  Source: Tversky and Kahneman, 'Judgment under uncertainty: Heuristics and biases' (Science, 1974)

- Kruger and Dunning (1999) found that participants who scored in the bottom quartile on a test of logical reasoning estimated their performance to be above average. This is a direct demonstration of the Dunning-Kruger effect.

  Source: Kruger and Dunning, 'Unskilled and unaware of it' (Journal of Personality and Social Psychology, 1999)

- Psychologist Raymond Nickerson described confirmation bias as 'a ubiquitous phenomenon in many guises', appearing in scientific reasoning, legal judgment, and everyday decision-making alike.

  Source: Nickerson, 'Confirmation bias: A ubiquitous phenomenon in many guises' (Review of General Psychology, 1998)

Cognitive biases, philosophy, and the examined life

The study of cognitive biases connects to one of philosophy's oldest preoccupations: how do we know what we know, and how can we reason well? Socrates famously argued that the unexamined life is not worth living. The heuristics-and-biases research tradition, pioneered by Kahneman and Tversky from the 1970s onward, is in many ways an empirical answer to the same question.

Where ancient philosophers relied on argument and introspection, cognitive scientists use experiments and data. But both traditions converge on a humbling conclusion: human reasoning is far more fallible than we naturally assume. We are not the rational agents that classical economic theory once supposed. We are pattern-matching animals whose mental shortcuts were shaped by evolutionary pressures that predate written language, let alone the internet.

This insight does not license despair. It licenses humility — and careful institutional design. Behavioural economics, nudge theory, and evidence-based policy all draw on our knowledge of biases to design environments in which people are more likely to make decisions that reflect their own considered values rather than their reflexive impulses.

At the individual level, studying cognitive biases is an act of intellectual self-awareness. It belongs alongside logic, epistemology, and ethics as part of a rigorous education in how to think. Students who understand these biases are better equipped to evaluate arguments, engage with evidence, and navigate a world saturated with information engineered to exploit the very shortcuts described in this article. The question of what cognitive biases are, and how they undermine clear reasoning, is therefore as philosophical as it is psychological.

Frequently asked questions

- What are cognitive biases in simple terms?

- Cognitive biases are predictable errors in thinking caused by mental shortcuts the brain uses to process information quickly. They cause us to misjudge probability, distort memories, and favour information that confirms what we already believe, often without realising it.

- How many cognitive biases are there?

- Researchers have catalogued over 180 distinct cognitive biases, though many overlap. The most studied include confirmation bias, anchoring, the availability heuristic, the Dunning-Kruger effect, and the framing effect.

- Can you get rid of cognitive biases?

- Not entirely. Even experts who study biases remain vulnerable to them. However, deliberate strategies can meaningfully reduce their impact. These include slowing down decisions, seeking disconfirming evidence, using checklists, and working with diverse teams.

- What is confirmation bias?

- Confirmation bias is the tendency to seek out and favour information that supports your existing beliefs, while ignoring or discounting evidence that contradicts them. It is one of the most pervasive biases documented in psychological research.

- What is the Dunning-Kruger effect?

- The Dunning-Kruger effect is the finding that people with limited knowledge in a domain tend to overestimate their competence. Kruger and Dunning proposed that this happens partly because the skills needed to recognise good performance are the same skills required to produce it.

- Why do cognitive biases matter for students?

- Cognitive biases affect how students evaluate sources, interpret exam questions, and form opinions on complex topics. Understanding them supports stronger critical thinking, better academic writing, and more thoughtful engagement with information and argument.