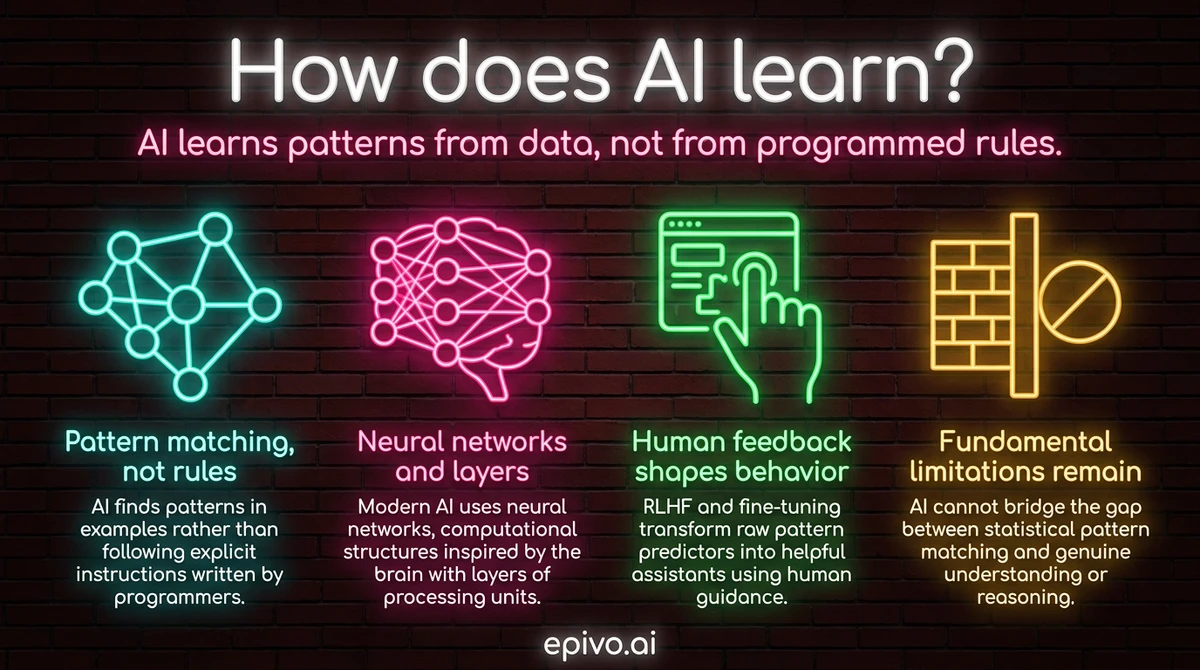

Training on data: finding patterns in examples

The first thing to understand about how AI learns is that it does not follow a set of rules written by programmers. A traditional software application is explicit: if the customer clicks this button, run this function. Every behaviour is hand-coded.

AI systems work differently. They are exposed to enormous quantities of examples — text, images, audio, numerical data — and they adjust their internal parameters until they can reliably predict what comes next, or what category something belongs to. The rules are never written down. They emerge from the data itself.

This process is called machine learning. The two most common flavours are supervised and unsupervised learning.

In supervised learning, the training data includes the right answers. An AI trained to classify customer support emails as urgent or routine is shown thousands of examples already labelled by humans. It learns to recognise the signals — word choice, sentence length, the presence of words like "broken" or "refund" — that correlate with urgency. After enough examples, it can classify new emails it has never seen.

In unsupervised learning, there are no labels. The AI is given raw data and must find structure on its own. It might discover that customers naturally fall into three behavioural groups, even though no one told it to look for groups. This is how recommendation engines find content clusters and how anomaly detection systems identify unusual transactions.

Both approaches share the same core idea: the AI does not receive instructions about what to look for. It discovers what is useful by being exposed to enough examples. This is why the quality and quantity of training data matters so much — the AI can only learn what the data contains.

Neural networks and deep learning

The mechanism behind most modern AI is the neural network — a computational structure loosely inspired by the brain. A neural network consists of layers of simple processing units called neurons. Each neuron receives numerical inputs, applies a transformation, and passes its output to the next layer.

The first layer processes raw input — pixels in an image, or word tokens in a sentence. Middle layers, called hidden layers, progressively extract more abstract features. The final layer produces the model's prediction or output. A network with many hidden layers is called a deep neural network — hence the term deep learning.

The crucial question is: how does the network learn which transformations to apply? The answer is gradient descent, the core algorithm of modern AI training. At the start, the network's internal parameters (called weights) are set randomly. It makes a prediction, and that prediction is compared against the correct answer. The gap between prediction and reality is the error.

Gradient descent works backwards through the network, calculating how much each weight contributed to the error, and nudging every weight slightly in the direction that would have reduced it. This process — called backpropagation — repeats millions or billions of times across the training data. Gradually, the weights converge on values that make accurate predictions.

Scale matters enormously. A network trained on a thousand examples learns something useful but limited. A network trained on hundreds of billions of words across a significant fraction of the written internet learns something qualitatively different — it develops broad, flexible representations of language, reasoning, and knowledge. This is why the jump from early language models to systems like GPT-4 felt like a phase change rather than an incremental improvement.

RLHF and fine-tuning: from pattern predictor to helpful assistant

A language model trained purely on internet text learns to predict what words come next in a document. This is useful but not the same as being helpful. A raw model trained this way might complete a question with more questions, or mimic the style of a toxic forum thread, because those patterns exist in the training data.

Transforming a pattern predictor into a system like ChatGPT or Claude requires an additional step called instruction tuning followed by reinforcement learning from human feedback (RLHF). Understanding this step helps explain why how ChatGPT generates responses often feels qualitatively different from earlier AI systems.

Instruction tuning involves fine-tuning the model on a curated dataset of (instruction, response) pairs — examples of a user asking something and a human expert writing a high-quality answer. This teaches the model to interpret requests and respond to them directly, rather than continuing text in the style of whatever it was pre-trained on.

RLHF goes further. Human raters compare different model outputs and indicate which ones are better — more accurate, more helpful, less harmful. A separate model is trained to predict these human preferences. This preference model is then used as a reward signal to further adjust the main language model through reinforcement learning. The language model is nudged toward outputs that human raters tend to prefer.

The result is a model that is not merely predicting likely text — it is producing text that has been shaped by human judgement about what good responses look like. This is why modern AI assistants follow instructions, decline harmful requests, and maintain a coherent persona. These behaviours are not programmed explicitly; they are the outcome of training on human preference signals.

Did you know?

-

GPT-4 was trained on an estimated 1 trillion or more tokens of text — roughly the equivalent of millions of books — requiring thousands of specialised AI chips running for months.

MIT Technology Review — The inside story of how ChatGPT was built -

According to Stanford's 2024 AI Index, the training compute for frontier AI models doubles roughly every six to twelve months, driving the rapid capability gains seen since 2020.

Stanford HAI — AI Index Report 2024 -

Anthropic's Constitutional AI method extends RLHF by having the model critique its own outputs against a set of principles — reducing harmful responses without requiring as many human labels.

Anthropic — Constitutional AI Research

What AI still cannot learn

Understanding how AI learns also means understanding where it breaks down. These are not temporary engineering problems — they reflect fundamental gaps between statistical pattern matching and genuine understanding.

AI systems struggle with common-sense reasoning about the physical world. A language model knows that glass is fragile because that association appears countless times in text. But it has no sensorimotor experience of dropping things. Its knowledge is inferential and statistical, not grounded in the kind of embodied experience that makes a human automatically reach for a plastic cup when their hands are wet.

AI cannot reliably distinguish correlation from causation. It can predict that patients who take a certain drug recover faster, but it cannot determine whether the drug caused the recovery or whether healthier patients are simply more likely to be prescribed it. Causal reasoning requires a model of how the world works, not just patterns in data about what tends to co-occur.

AI also has no stable sense of time, self, or agency. A generative AI system does not know what it knows or doesn't know — it generates plausible text and can fabricate confident-sounding claims about things it has never encountered. This is the source of hallucination: the model is doing what it was trained to do (produce likely next tokens) even when it has no reliable signal to draw on.

For professionals, these limitations have practical implications. AI tools work best when the task is well-defined and there is a clear signal in training data about what good looks like — writing assistance, summarisation, code generation, pattern recognition in structured data. They are less reliable when the task requires genuine causal judgment, novel physical reasoning, or accurate self-assessment of confidence. Knowing which category your task falls into is one of the most useful things you can take from understanding how AI learns.

Frequently asked questions

- How does AI learn from data?

- AI learns by adjusting millions of internal parameters — called weights — based on exposure to large quantities of examples. Each time it makes a wrong prediction, an algorithm called gradient descent nudges the weights slightly toward the correct answer. After billions of adjustments, the model becomes accurate.

- What is training in the context of AI?

- Training is the process of running data through an AI model, measuring how far off its predictions are, and using that error signal to update the model's internal parameters. It is repeated millions of times until the model's predictions match the training data reliably enough to generalise to new examples.

- Can AI learn on its own after training?

- Most deployed AI models are static — their weights are fixed after training and do not update as users interact with them. Learning happens in distinct training runs, not continuously. Some systems are periodically retrained on new data, but the day-to-day AI you interact with is not learning from your conversations.

- Does AI actually understand what it learns?

- Not in the way humans do. AI learns statistical associations between patterns in data. It can produce impressively correct outputs without having a causal model of the world, physical intuitions, or genuine comprehension. This is why AI can write fluently about topics and still make nonsensical factual errors.

- What is deep learning?

- Deep learning is machine learning using neural networks with many layers — typically dozens to hundreds. Each layer learns progressively more abstract representations of the input. Deep learning is responsible for most recent AI breakthroughs, including image recognition, language models, and speech synthesis.

- How is AI different from a rules-based system?

- A rules-based system follows explicit instructions written by a programmer. An AI system derives its behaviour from patterns in training data — the rules are never written down; they emerge automatically. This makes AI more flexible for complex tasks but less predictable and harder to audit than hand-coded logic.