What ChatGPT actually is

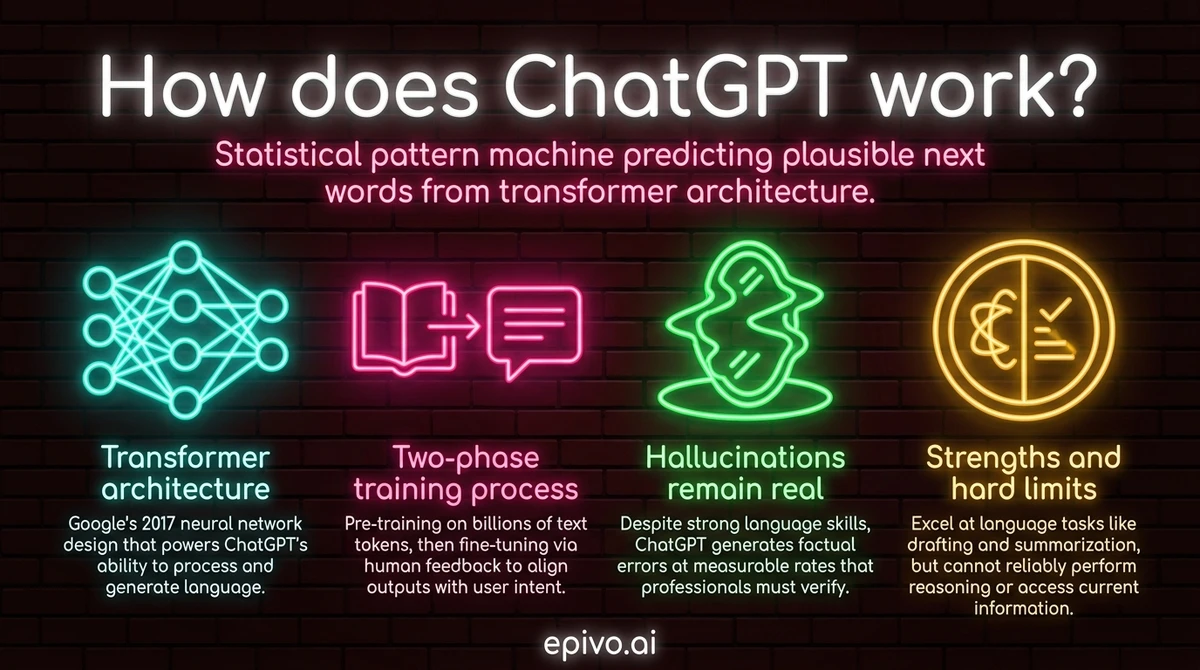

ChatGPT is a large language model (LLM) — a type of AI system built on a neural network architecture called the transformer, introduced by Google researchers in 2017. At its simplest, it is a very large mathematical function that takes text as input and predicts what text should come next.
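Written as a formula, "predicting what comes next" is usually expressed as follows. This is the standard textbook formulation of an autoregressive language model, not anything specific to OpenAI's implementation. The symbols are our own: $x_t$ is the token at position $t$, $V$ is the vocabulary, $\theta$ stands for the model's parameters, and $z = f_\theta(x_{<t})$ is the vector of raw scores (logits) the network outputs for the context so far.

$$
P(x_1, \dots, x_T) = \prod_{t=1}^{T} P(x_t \mid x_{<t}),
\qquad
P(x_t = i \mid x_{<t}) = \frac{\exp(z_i)}{\sum_{j \in V} \exp(z_j)}
$$

The first expression says the probability of a whole passage is the product of one next-token prediction per position. The second, the softmax function, turns the network's raw scores into probabilities that sum to one.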

To understand generative AI, it helps to start with what a language model actually does. During training, the model processes enormous quantities of text (web pages, books, articles, code repositories) and learns statistical patterns: which words, phrases, and ideas tend to follow which others, and in what contexts. By the end of training, these patterns are encoded in the model's parameters, the billions of numerical weights that define the network.
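A real LLM stores what it learns as neural-network weights, not as an explicit table, but the idea of "learning which words tend to follow which" can be shown with the simplest possible stand-in. This minimal sketch assumes a word-level bigram model and a three-sentence toy corpus of our own; it counts which word follows which and normalises the counts into probabilities.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, dict[str, float]]:
    """Count which word follows which, then normalise counts into probabilities."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1

    model = {}
    for prev, followers in counts.items():
        total = sum(followers.values())
        model[prev] = {word: n / total for word, n in followers.items()}
    return model


# Toy corpus, invented for illustration only.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]
model = train_bigram(corpus)
print(model["the"])
```

In this corpus "cat" follows "the" in two of the six cases, so it receives probability 2/6, while "sat" is always followed by "on". An LLM learns far richer, context-dependent versions of the same kind of regularity.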

When you send ChatGPT a message, it does not retrieve a pre-written answer from a database. It generates a response one token at a time (a token being roughly a word or word fragment). At each step it computes a probability for every possible next token, given everything that came before, and selects one, typically by sampling from the more probable options rather than always taking the single most likely token. The result reads like fluent human writing because fluent human writing is what it was trained on.
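The generation loop can be sketched with a toy stand-in for the model. In this minimal sketch, a hand-written table of scores (the hypothetical `LOGITS`) plays the role of the transformer and looks only at the previous token, whereas a real model scores candidates using the whole context. The loop converts scores to probabilities with a softmax, samples one token, appends it, and repeats until an end marker. The `temperature` parameter, a common sampling control, sharpens or flattens the distribution: near zero the loop almost always picks the single most probable token.

```python
import math
import random

# Hypothetical toy "model": for each previous token, a raw score (logit) per candidate next token.
LOGITS = {
    "<start>": {"the": 2.0, "a": 1.0},
    "the": {"cat": 2.0, "dog": 1.5, "mat": 0.5},
    "a": {"cat": 1.0, "dog": 1.0},
    "cat": {"sat": 2.0, "<end>": 0.5},
    "dog": {"sat": 1.5, "<end>": 0.5},
    "sat": {"<end>": 1.0},
    "mat": {"<end>": 1.0},
}


def softmax(scores: dict[str, float], temperature: float) -> dict[str, float]:
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = {tok: s / temperature for tok, s in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def generate(temperature: float = 1.0, max_tokens: int = 10, seed: int = 0) -> list[str]:
    """Generate tokens one at a time until the end marker or the length limit."""
    rng = random.Random(seed)
    context = ["<start>"]
    for _ in range(max_tokens):
        probs = softmax(LOGITS[context[-1]], temperature)
        # Sample the next token in proportion to its probability.
        next_tok = rng.choices(list(probs), weights=list(probs.values()), k=1)[0]
        if next_tok == "<end>":
            break
        context.append(next_tok)
    return context[1:]


print(" ".join(generate(temperature=0.01)))
print(" ".join(generate(temperature=1.0, seed=42)))
```

With a very low temperature the output is effectively deterministic; at `temperature=1.0`, different seeds can produce different sentences from the same table of scores.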

This is not reasoning in the way humans reason; it is sophisticated pattern completion at enormous scale. That distinction matters a great deal for how you interpret its output.

How the training works

ChatGPT's capabilities come from two distinct training phases, each shaping the model in different ways.

The first phase is pre-training. The model is exposed to a vast corpus of text, hundreds of billions of words, and trained to predict the next token at every position. Each time its prediction differs from the token that actually comes next, its internal parameters are adjusted slightly (via gradient descent) to make the correct token more likely. Repeated across the whole corpus, this is machine learning at industrial scale, and it is computationally intensive, requiring thousands of specialised processors running for weeks or months. By the end, the model has a broad, general command of language and a wide knowledge base derived from its training data.
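The parameter-adjustment loop described above can be sketched on a tiny scale. Below, a hypothetical bigram model keeps one score per (previous token, next token) pair and is trained by stochastic gradient descent on cross-entropy loss, the standard next-token objective. This is a deliberate simplification: real pre-training uses a transformer that conditions on the whole context, batched updates, and billions of parameters.

```python
import math

# Toy training data: (previous token, actual next token) pairs from a tiny, made-up corpus.
PAIRS = [("the", "cat"), ("the", "cat"), ("the", "dog"), ("cat", "sat"), ("dog", "sat")]
VOCAB = ["the", "cat", "dog", "sat"]

# Parameters: one score (logit) per (previous token, next token) pair, all starting at zero.
weights = {prev: {nxt: 0.0 for nxt in VOCAB} for prev in VOCAB}


def probs_for(prev: str) -> dict[str, float]:
    """Softmax over the scores for every possible next token."""
    exps = {nxt: math.exp(s) for nxt, s in weights[prev].items()}
    total = sum(exps.values())
    return {nxt: e / total for nxt, e in exps.items()}


def average_loss() -> float:
    """Cross-entropy: average negative log-probability given to the true next token."""
    return sum(-math.log(probs_for(p)[n]) for p, n in PAIRS) / len(PAIRS)


def train_step(learning_rate: float = 0.5) -> None:
    """One pass over the data, nudging scores after every prediction."""
    for prev, actual in PAIRS:
        probs = probs_for(prev)
        for nxt in VOCAB:
            # Gradient of cross-entropy w.r.t. each logit is (predicted prob - target).
            target = 1.0 if nxt == actual else 0.0
            weights[prev][nxt] -= learning_rate * (probs[nxt] - target)


losses = [average_loss()]
for _ in range(50):
    train_step()
    losses.append(average_loss())
print(f"loss before: {losses[0]:.3f}, after: {losses[-1]:.3f}")
```

The loss starts at the value for a uniform guess over four tokens and falls as the scores come to reflect the data: "sat" becomes the near-certain prediction after "cat", while after "the" the model learns to split its probability between "cat" and "dog" roughly in line with how often each occurred.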

The second phase is fine-tuning, most notably with a technique called reinforcement learning from human feedback, or RLHF. Human trainers compare and rank model responses for quality, helpfulness, and safety. Those rankings are used to train a separate reward model, which learns to score new responses; the LLM is then optimised, through reinforcement learning, to produce responses the reward model scores highly. This is why ChatGPT behaves differently from a raw language model: it has been shaped to be helpful, to decline harmful requests, and to adopt a conversational tone rather than simply continuing text in the statistical style of its training corpus.
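A common formulation of the reward-model step, used for example in OpenAI's InstructGPT work, trains the reward model so that, for each pair of responses a human compared, the preferred one scores higher, by minimising -log(sigmoid(r_chosen - r_rejected)). The sketch below applies that pairwise loss to a linear reward model over made-up two-number feature vectors. This is an assumption-heavy simplification: in practice the reward model is itself a large neural network reading the text, and the separate reinforcement-learning step that then tunes the LLM against it is not shown.

```python
import math

# Hypothetical preference data: (features of the response a rater preferred,
# features of the response they rejected). The numbers are invented stand-ins
# for whatever a real reward model would compute from the text itself.
COMPARISONS = [
    ([1.0, 0.2], [0.1, 0.9]),
    ([0.8, 0.1], [0.3, 0.7]),
    ([0.9, 0.3], [0.2, 0.8]),
]


def reward(w: list[float], features: list[float]) -> float:
    """Linear reward: a weighted sum of the features."""
    return sum(wi * xi for wi, xi in zip(w, features))


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_reward_model(steps: int = 200, lr: float = 0.1) -> list[float]:
    """Gradient descent on the pairwise loss -log(sigmoid(r_chosen - r_rejected))."""
    w = [0.0, 0.0]
    for _ in range(steps):
        for chosen, rejected in COMPARISONS:
            margin = reward(w, chosen) - reward(w, rejected)
            # Large when the model still disagrees with the rater, near zero once it agrees.
            grad_scale = 1.0 - sigmoid(margin)
            for i in range(len(w)):
                w[i] += lr * grad_scale * (chosen[i] - rejected[i])
    return w


w = train_reward_model()
for chosen, rejected in COMPARISONS:
    print(round(reward(w, chosen), 2), ">", round(reward(w, rejected), 2))
```

After training, every preferred response outscores its rejected counterpart. The reward model only learns what raters preferred, which is why optimising against it rewards responses that win human approval rather than responses that are verified to be true.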

RLHF is also why the model can feel almost like a person to talk to. It has been optimised extensively for human approval — which is useful, but also means it can be confidently wrong in ways that feel convincing.

What ChatGPT can and cannot do

Understanding the gap between ChatGPT's strengths and limitations is the most practically useful thing a professional can take away from this article.

ChatGPT performs well at language tasks: drafting, summarising, editing, explaining concepts in different styles, translating, generating code, and brainstorming. These tasks align closely with what it was trained to do — produce plausible, fluent text that matches a given prompt.

It performs poorly at tasks that require reliable factual accuracy, formal mathematical reasoning, or up-to-date information. The hallucination problem is real: because the model predicts plausible text rather than retrieving verified facts, it routinely generates incorrect information with high confidence. Citations may be fabricated. Statistics may be wrong. Dates and names may be plausible but inaccurate. This is not a bug that will be fully fixed — it is a consequence of the underlying architecture.
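The mechanism can be seen even in a toy model. This sketch is our own illustration and assumes a word-level bigram model, which is vastly simpler than an LLM: it learns from two true sentences and then lists every sentence it can produce starting from "paris".

```python
from collections import defaultdict

# Two true sentences. A bigram model learns only "which word follows which".
corpus = [
    "paris is the capital of france",
    "rome is the capital of italy",
]

follows: dict[str, set[str]] = defaultdict(set)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follows[prev].add(nxt)


def completions(prefix: list[str]) -> list[str]:
    """Enumerate every sentence the model could produce from this prefix."""
    last = prefix[-1]
    if last not in follows:  # no learned continuation: the sentence ends here
        return [" ".join(prefix)]
    results = []
    for nxt in sorted(follows[last]):
        results.extend(completions(prefix + [nxt]))
    return results


for sentence in completions(["paris"]):
    print(sentence)
```

Both outputs fit the learned patterns equally well, but the second, "paris is the capital of italy", is false. Nothing in the model separates a true combination of patterns from a false one. A real LLM would be unlikely to make this particular error, but the same gap between "plausible" and "verified" exists at scale.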

The model also has no memory between conversations by default, no persistent understanding of your organisation or context, and no ability to access real-time information unless connected to external tools. Its knowledge has a training cutoff date, beyond which it knows nothing.

For professionals, the practical implication is clear: use ChatGPT as a skilled first-drafter and thinking partner, not as a fact source. Verify anything that matters independently. Treat its confident tone as a feature of its training, not evidence of accuracy.
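Part of verifying is simply noticing which claims need checking. As a minimal sketch, the hypothetical helper below scans a draft for the kinds of specifics that most need independent confirmation (percentages, years, large numbers, citation-like strings) so you can check each one against a primary source. The patterns are illustrative assumptions, not an exhaustive detector, and a match says nothing about whether a claim is true or false.

```python
import re

# Hypothetical heuristics for claims worth checking; illustrative, not exhaustive.
CHECK_PATTERNS = {
    "percentage": r"\b\d+(?:\.\d+)?%",
    "year": r"\b(?:19|20)\d{2}\b",
    "large number": r"\b\d{1,3}(?:,\d{3})+\b|\b\d+(?:\.\d+)?\s(?:million|billion)\b",
    "citation-like": r"\bet al\.|\(\d{4}\)|\bv\.\s",
}


def flag_claims(text: str) -> list[tuple[str, str]]:
    """Return (claim type, matched text) for every pattern hit, in order of position."""
    hits = []
    for label, pattern in CHECK_PATTERNS.items():
        for match in re.finditer(pattern, text):
            hits.append((match.start(), label, match.group()))
    return [(label, found) for _, label, found in sorted(hits)]


# A made-up draft sentence, standing in for model output.
draft = "Adoption grew 45% in 2023, reaching 2.5 million users (Smith et al., 2022)."
for label, found in flag_claims(draft):
    print(f"verify {label}: {found}")
```

A checklist like this does not replace judgement; it only makes sure the specifics in a draft get looked at before the draft leaves your hands.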

Did you know?

ChatGPT reached 100 million users within two months of launch in late 2022 — the fastest consumer technology adoption ever recorded at that point.

Reuters — ChatGPT sets record for fastest-growing user base

Stanford HAI's 2023 AI Index Report found that large language model performance on professional exams, including the bar exam and medical licensing exams, had improved dramatically, with GPT-4 scoring in the top 10% of test-takers on a simulated bar exam.

Stanford HAI — AI Index Report 2023

Despite their strong language capability, LLMs still produce hallucinated facts at measurable rates. A 2023 study found that ChatGPT produced incorrect citations in over 60% of legal research queries tested.

MIT Technology Review — AI and hallucination in legal research

Practical implications for professionals

Knowing how ChatGPT works changes how you use it — and how you evaluate the output you get back.

The most effective professional users of AI tools treat them the way a good manager treats a capable but junior employee: they brief clearly, check the work, and verify anything consequential before it leaves the building. Effective prompting follows directly from the model's architecture: because the model completes the text it is given, clear and specific prompts give it better material to complete.

For knowledge work specifically, the key disciplines are:

Verify facts before citing them. The model's confident tone reflects training, not accuracy. Any statistic, date, legal reference, or technical claim should be independently confirmed before you rely on it or share it.

Use it for structure and speed, not for authority. ChatGPT excels at turning a rough idea into a structured draft, generating multiple framings of an argument, or reducing a long document to its key points. These are acceleration tasks, not source tasks.

Be explicit about context. The model has no knowledge of your organisation, your clients, your constraints, or your audience unless you provide that context in the conversation. The more relevant context you include, the more useful the output.

Treat each conversation as stateless. By default, the model remembers nothing from previous sessions. Relevant background — who you are, what you are working on, what constraints apply — needs to be re-established each time unless you are using a tool that provides persistent memory.
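One practical way to apply both habits is to keep a reusable context brief and supply it at the start of every session. The sketch below models this with a hypothetical `Conversation` class (not part of any real SDK): the brief becomes the first message of every new conversation, and each turn sends the full message list, because that list is all the model sees. The `system`/`user`/`assistant` role names follow the convention common to chat-style APIs, but no API is called here, and the brief's contents are invented for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical context brief: background you would otherwise retype in every new session.
BRIEF = (
    "I am a compliance analyst at a mid-sized bank. "
    "Audience: non-specialist executives. Constraints: UK spelling, under 300 words."
)


@dataclass
class Conversation:
    """Sketch of a stateless chat session: the model sees only what is in `messages`."""

    brief: str
    messages: list[dict[str, str]] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Re-establish the background at the start of every new conversation.
        self.messages.append({"role": "system", "content": self.brief})

    def ask(self, question: str) -> list[dict[str, str]]:
        """Add a question and return a copy of the full history that would be sent."""
        self.messages.append({"role": "user", "content": question})
        return list(self.messages)

    def record_reply(self, reply: str) -> None:
        self.messages.append({"role": "assistant", "content": reply})


chat = Conversation(BRIEF)
payload = chat.ask("Draft a one-paragraph summary of our new expenses policy.")
print(len(payload), payload[0]["role"])
```

A new `Conversation` starts with nothing but the brief, mirroring the default behaviour described above: anything from an earlier session that matters has to be supplied again.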

The professionals who get the most value from AI tools are not those who trust them most; they are those who understand what the tools are actually doing and calibrate their use accordingly. That understanding starts with knowing how ChatGPT works at a mechanical level and ends with knowing what that means for the reliability of what it produces.

Frequently asked questions

- What is ChatGPT?

- ChatGPT is a large language model (LLM) developed by OpenAI. It generates text responses by repeatedly predicting a likely next token given the conversation so far, drawing on patterns learned from a massive training corpus of human-written text.

- How does ChatGPT generate text?

- It generates text one token at a time, using a transformer neural network to compute a probability for each possible next token given everything that came before, then selecting one. The process repeats until the response is complete. There is no retrieval from a database: each token is predicted fresh.

- Why does ChatGPT sometimes get facts wrong?

- Because it predicts plausible text rather than retrieving verified facts. It has no internal truth-checker. If a confident-sounding but incorrect claim fits the statistical pattern of its training data, it will produce that claim. This is called hallucination and affects all current LLMs.

- How is ChatGPT different from a search engine?

- A search engine retrieves existing documents that match a query. ChatGPT generates new text based on patterns in its training data. Unless it is connected to a search tool, it does not look anything up in real time. This makes it more flexible for language tasks but unreliable as a source of current or verified information.

- Is it safe to use ChatGPT at work?

- It depends on what you share. Anything you type into ChatGPT may be used to improve the model unless you have opted out or are using an enterprise plan with data controls. Do not paste confidential documents, client data, or proprietary information unless your organisation has a reviewed enterprise agreement in place.

- What are the main limitations of ChatGPT for professional use?

- The main limitations are hallucination (confident factual errors), a training data cutoff (no knowledge of recent events), no persistent memory between sessions, and no access to your organisation's internal information. It is strongest as a drafting and thinking tool, not as a research or verification tool.