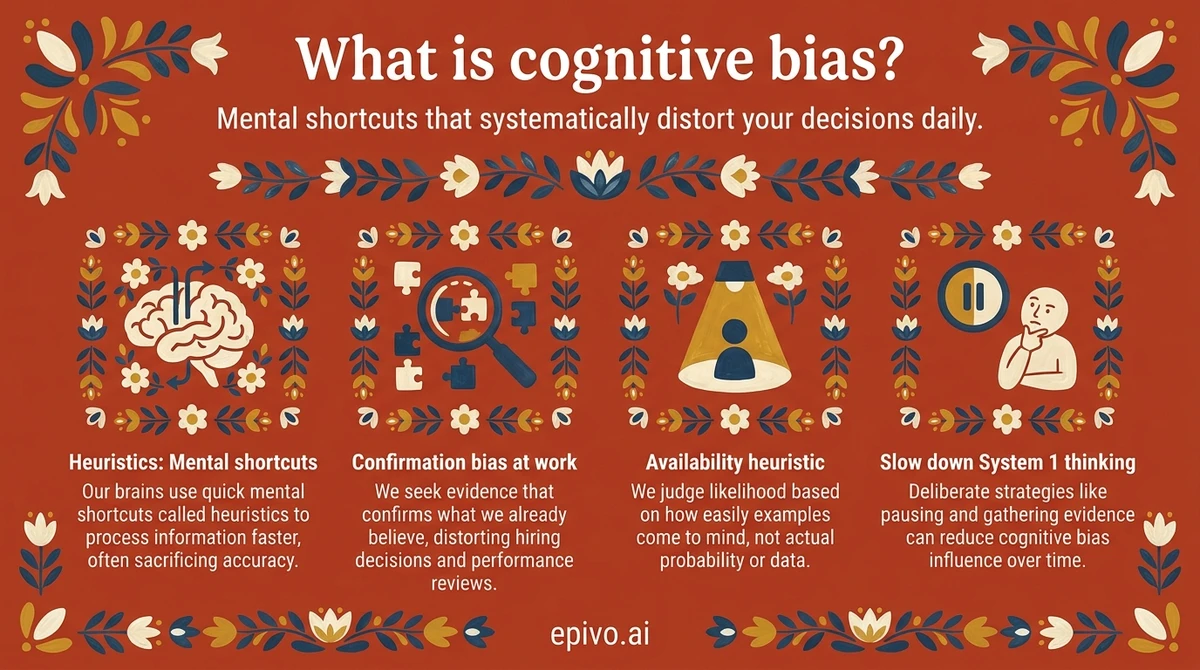

What is a cognitive bias?

A cognitive bias is a systematic pattern of deviation from rational judgment. Rather than weighing all evidence objectively, our minds take mental shortcuts called heuristics. These usually serve us well but can lead to predictable errors.

The modern scientific understanding of cognitive bias was shaped by psychologists Daniel Kahneman and Amos Tversky. In their landmark 1974 paper in Science, they showed that people rely on a small number of heuristic principles to assess probabilities and predict values. These shortcuts reduce complex tasks to simpler judgments — and in doing so, introduce systematic and predictable biases.

System 1 and System 2 thinking

Kahneman later organised these findings into a framework in his 2011 book Thinking, Fast and Slow. He described two modes of thought. System 1 is fast, automatic, and intuitive. It operates below conscious awareness and handles most of our daily decisions. System 2 is slow, deliberate, and analytical. It requires conscious effort and deals with complex reasoning.

Cognitive biases mostly arise from System 1. Our fast-thinking brain is efficient but pattern-prone. It fills in gaps, reaches for the most vivid example, and sticks with the first number it hears. Knowing this is the starting point for thinking more clearly.

Explore our psychology and decision-making curriculum to go deeper on this topic.

Common types of cognitive bias

Kahneman, Tversky, and the researchers who built on their work have documented dozens of cognitive biases. Three are especially relevant to professional life.

Availability heuristic

The availability heuristic leads us to judge the probability of an event by how easily examples come to mind. If you can quickly recall a plane crash, you are likely to overestimate the danger of flying, even though travelling by car carries a higher risk of death per distance covered. In the workplace, this means recent or dramatic failures often receive disproportionate weight in risk discussions, while gradual or invisible risks get underestimated.

Anchoring effect

The anchoring effect occurs when the first number we hear unduly influences our subsequent judgments. In a classic experiment by Tversky and Kahneman, participants watched a wheel of fortune stop on either a high or a low number and were then asked to estimate the percentage of African countries in the United Nations. Those who saw the high number gave markedly higher estimates than those who saw the low one. In salary negotiations, the first figure mentioned, by either side, acts as an anchor that pulls the final number towards it. Awareness alone does not fully neutralise anchoring; its pull is surprisingly persistent.

Confirmation bias

Confirmation bias is the tendency to search for, interpret, and recall information in a way that confirms our existing beliefs. When evaluating a new strategy, we instinctively gravitate toward evidence that supports it and discount evidence that challenges it. This is not a matter of intelligence or education; it is a structural feature of how human cognition works.

See "What is positive psychology?" and "What is emotional intelligence?" for related research on how we think and feel under pressure.

How cognitive biases affect decisions at work

Cognitive bias does not stay in the laboratory. It shapes hiring decisions, strategic choices, performance reviews, and everyday team interactions.

Hiring and performance reviews

Confirmation bias can lead hiring managers to favour candidates who resemble past successes, filtering out potentially stronger applicants. In performance reviews, the availability heuristic means recent events — a strong finish to the quarter or a single visible mistake — carry more weight than a full year of work.

Anchoring in negotiations and budgets

When setting budgets or negotiating contracts, the first figure on the table becomes an anchor. Teams that do not explicitly challenge initial estimates tend to converge on numbers that are too close to that starting point. Structured processes — such as independent estimates before group discussion — reduce this effect.

Loss aversion and strategic inertia

Research by Kahneman, Knetsch, and Thaler showed that the pain of a loss typically feels roughly twice as intense as the pleasure of an equivalent gain. This asymmetry — known as loss aversion — can cause organisations to cling to failing projects far longer than is rational. The fear of 'losing what we have invested' overrides the case for switching course.
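The asymmetry can be made concrete with a small sketch. It assumes a simplified, piecewise-linear value function and a loss-aversion coefficient of exactly 2.0, taken from the "roughly twice" figure above; the function names and the gambles are illustrative, not drawn from the original research.

```python
# Illustrative only: a piecewise-linear value function with an assumed
# loss-aversion coefficient of 2.0 (the "roughly twice" figure in the text).
LOSS_AVERSION = 2.0  # assumption, not a measured constant


def subjective_value(outcome: float, loss_aversion: float = LOSS_AVERSION) -> float:
    """Return the felt value of a gain (positive) or a loss (negative)."""
    if outcome >= 0:
        return outcome
    return loss_aversion * outcome  # losses are scaled up in magnitude


def gamble_value(outcomes_with_probs: list[tuple[float, float]]) -> float:
    """Probability-weighted sum of felt values for a list of (outcome, probability)."""
    return sum(p * subjective_value(x) for x, p in outcomes_with_probs)


# A fair coin flip: win 100 or lose 100. Objectively it is worth zero.
fair_coin_flip = [(100.0, 0.5), (-100.0, 0.5)]
print(gamble_value(fair_coin_flip))  # -50.0

# Under this assumption the gain must double before the flip feels neutral.
sweetened_flip = [(200.0, 0.5), (-100.0, 0.5)]
print(gamble_value(sweetened_flip))  # 0.0
```

Under these assumptions an objectively neutral bet feels like a losing one, which is one way to read why writing off a project can feel worse than its expected cost warrants.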

For professionals, understanding these patterns is not an academic exercise. It is practical intelligence. Visit "For parents" to see how Epivo teaches these skills to the next generation.

Did you know?

- Daniel Kahneman won the Nobel Prize in Economics in 2002 for his work with Amos Tversky on decision-making under uncertainty, the research that gave us the modern science of cognitive bias. Source: Nobel Prize Committee, Daniel Kahneman Facts.

- Tversky and Kahneman's 1974 Science paper on heuristics and biases has been cited over 40,000 times, making it one of the most influential papers in the history of social science. Source: Tversky & Kahneman (1974), Judgment under Uncertainty.

- The 1979 prospect theory paper by Kahneman and Tversky has been cited over 60,000 times, making it the most cited article in the history of Econometrica and a foundational text in behavioural economics. Source: Kahneman & Tversky (1979), Prospect Theory.

How to reduce cognitive bias

You cannot eliminate cognitive bias — it is wired into how the human brain works. But you can reduce its influence with deliberate strategies.

Slow down System 1

One of the simplest debiasing techniques is to engage System 2 deliberately. When a decision matters, pause. Ask: what evidence would change my mind? What would someone who disagrees with me say? This interrupts automatic thinking and creates space for more deliberate analysis.

Seek disconfirming evidence

To counter confirmation bias, actively look for information that challenges your current view. In team settings, assign someone the explicit role of devil's advocate. This is not about being contrarian — it is about stress-testing a position before committing resources to it.

Use pre-mortems

A pre-mortem is a structured exercise in which a team imagines that a project has already failed and works backwards to identify what went wrong. Developed by psychologist Gary Klein, this technique surfaces concerns that loss aversion or overconfidence would otherwise suppress.

Watch for anchors

Before entering a negotiation or budget discussion, form your own independent estimate. Write it down. Then, when an anchor is introduced by the other party, you have a reference point that is genuinely independent — making it easier to resist the pull.

Build checklists and structured processes

For high-stakes decisions, checklists and structured decision frameworks are among the best-supported tools available. Atul Gawande's work on surgical checklists, which borrows from aviation practice, shows that a systematic process reduces error rates even among highly trained experts. The same principle applies to professional decision-making.

Explore the psychology of decision-making curriculum to build these skills systematically with a personal AI tutor. You can also read more in "How to build good habits", which covers related research on behaviour change.

Frequently asked questions

- What is cognitive bias in simple terms?

- A cognitive bias is a systematic tendency to think or judge in ways that deviate from what pure logic or evidence would support. Such biases arise from mental shortcuts the brain uses to process information quickly. Most are the result of System 1 (fast, intuitive thinking) operating in situations that would benefit from slower, deliberate analysis.

- What are the most common cognitive biases?

- The most widely studied cognitive biases include confirmation bias (favouring information that confirms existing beliefs), the availability heuristic (judging probability by how easily examples come to mind), anchoring (over-relying on the first piece of information encountered), and loss aversion (feeling losses more intensely than equivalent gains). The last three were identified or formalised in the work of Kahneman and Tversky; confirmation bias traces back to earlier experiments by Peter Wason.

- Can cognitive bias be eliminated?

- No — cognitive biases cannot be fully eliminated because they arise from fundamental features of how the brain processes information. However, their influence can be significantly reduced. Strategies include slowing down decisions, actively seeking disconfirming evidence, using structured processes and checklists, and working in environments that build in checks against groupthink.

- Why did Daniel Kahneman win the Nobel Prize?

- Daniel Kahneman won the Nobel Prize in Economics in 2002 for his work with Amos Tversky on human judgment and decision-making under uncertainty. Their research demonstrated that people systematically deviate from the rational-actor model assumed by classical economics — founding the field of behavioural economics and giving us the modern science of cognitive bias.

- How does cognitive bias affect the workplace?

- Cognitive bias influences hiring decisions, performance reviews, strategic planning, negotiations, and risk assessment. Confirmation bias skews how managers evaluate candidates and strategies. Anchoring distorts budget and salary negotiations. Loss aversion causes teams to stick with failing projects too long. Awareness of these patterns, combined with structured processes, measurably improves decision quality.

- What is the difference between a heuristic and a cognitive bias?

- A heuristic is a mental shortcut or rule of thumb that simplifies complex judgments. A cognitive bias is the systematic error that results when a heuristic is applied in a context where it does not work well. Heuristics are often useful — they allow fast decisions with limited information. Cognitive bias is the predictable downside: the cases where the shortcut leads reasoning astray.