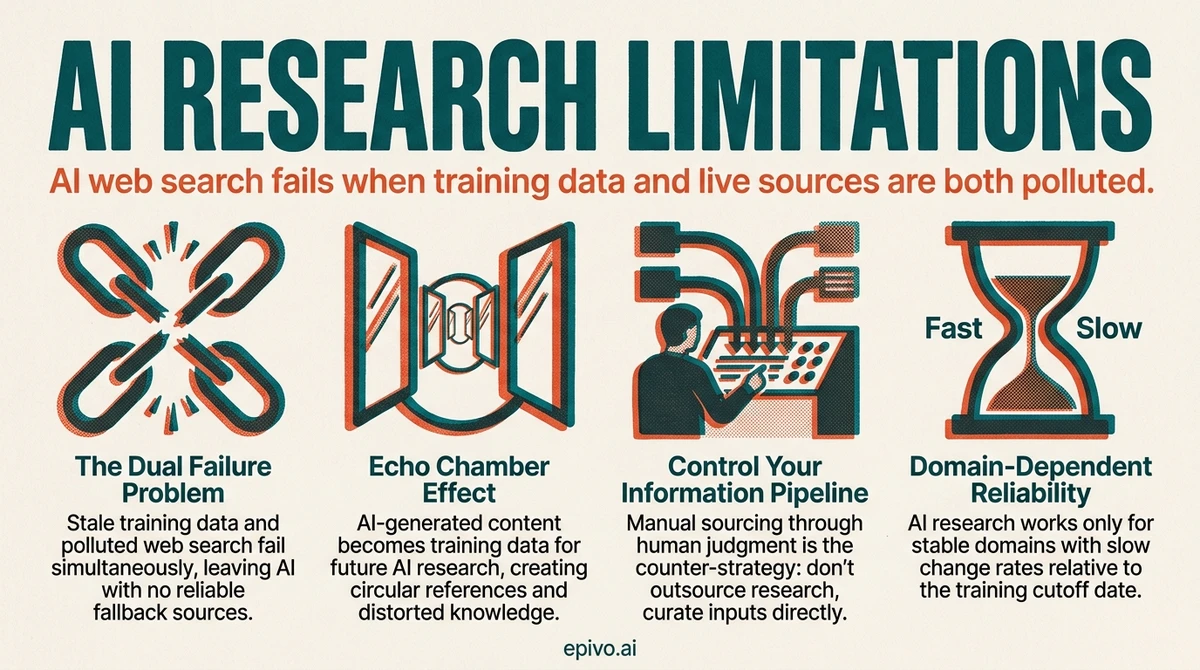

The dual failure: stale training data meets polluted web

Every AI model has a knowledge cutoff — a date after which it knows nothing. For any topic that changed after that date, the model draws on outdated information. It does not know what it does not know. It presents stale facts with the same confidence as current ones.

Web search was supposed to compensate. Modern AI tools can query the web, read the results, and incorporate fresh information. The problem is what the web now contains.

A growing share of online content is generated by AI systems. Content farms produce thousands of SEO-optimised articles daily. These articles are engineered to rank highly in search results, not to convey accurate information. They use the right keywords, the right structure, and the right length — because they were generated by systems that have learned exactly what search engines reward.

When your AI tool searches for current information, this is what it often finds first. It reads these articles, treats them as sources, and synthesises their content into a response. The result sounds authoritative. The sourcing is not.

This creates a compounding failure. The training data is stale. The web results are polluted. The two mechanisms that could compensate for each other instead both fail in the same direction. The AI sounds confident because it found consistent information across multiple sources — but consistency among AI-generated sources does not indicate accuracy. It indicates that the same patterns have been widely reproduced.

The echo chamber and the epistemological filter

The circular reference problem is worst in fields where AI is both the researcher and the subject. Every AI-generated article about AI becomes potential source material for future AI research about AI. Bad advice gets repeated until it looks like consensus.

But the problem extends beyond AI-specific topics. In any fast-moving field — regulation, market analysis, technology trends — the web fills quickly with commentary, opinion pieces, and summaries that cite each other rather than primary sources. AI tools treat all of these as equally valid references.

There is a deeper issue. Even when AI finds legitimate research — a genuine peer-reviewed paper published last month — it evaluates that paper through the lens of its training data. The model's understanding of the field was frozen at its cutoff date. It interprets new findings using outdated frameworks. A paper that contradicts the training data may be downweighted or mischaracterised, because the model's priors push it toward what it already believes.

This is the epistemological filter. Say the paper was published in 2026 and the model's training ended in 2025. The paper is real, but the AI's interpretation of it is filtered through a 2025 understanding of the field. The synthesis looks current. The analytical framework is not.

The practical consequence: you cannot trust AI to tell you what is new and what it means. It can tell you what its training data contained. It can tell you what the web says today. But it cannot reliably distinguish between authoritative and generated, between current and stale, between primary and derivative.

Image: Generated with AI (Epivo)

What to do instead: control the information pipeline

The counter-strategy is straightforward. Do not outsource sourcing to AI. Control the information pipeline yourself.

This means selecting authoritative references yourself and loading them directly into the model's context: textbooks, peer-reviewed papers, official documentation, government databases, and primary sources from domain experts with verifiable track records.

The AI then does what it does best. It synthesises. It finds patterns across the documents you provided. It identifies connections between sources you might have missed. It structures the information into frameworks you can use.

This division of labour matches the actual capabilities of the technology. AI is a synthesis engine, not a fact-finding engine. It excels at processing and connecting information that has been provided to it. It fails at independently verifying whether what it finds online is accurate, current, or even human-authored.

The builder who feeds five authoritative papers into context and asks for a synthesis gets a dramatically better result than the builder who asks AI to research the topic independently. The first approach controls the quality of inputs. The second outsources quality control to a system that cannot reliably perform it.
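One way to picture the first approach is a small helper that packs human-selected sources into a single prompt. This is a minimal sketch, not a prescribed implementation: the `Source` class, the `build_prompt` function, and the instruction wording are all hypothetical, and the resulting string would be passed to whatever AI tool you use.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """A human-selected reference. All fields are illustrative."""
    title: str
    origin: str  # who is accountable for it, e.g. a publisher or agency
    text: str


def build_prompt(sources: list[Source], question: str) -> str:
    """Assemble a prompt that restricts the model to the supplied sources."""
    if not sources:
        raise ValueError("provide at least one curated source")
    parts = [
        "Answer using only the numbered sources below.",
        "Cite sources by number. If they do not cover the question, say so.",
        "",
    ]
    # Number each source so the synthesis can be traced back to it.
    for i, src in enumerate(sources, start=1):
        parts.append(f"[{i}] {src.title} ({src.origin})")
        parts.append(src.text.strip())
        parts.append("")
    parts.append(f"Question: {question}")
    return "\n".join(parts)
```

Numbering the sources and naming their origin keeps the output traceable: each claim in the synthesis can be checked against a document you chose.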

This does not mean AI research is always useless. It means you must know when it is reliable and when it is not.

When AI research is good enough

Not every topic requires manual sourcing. The reliability of AI research depends on how fast the domain changes relative to the training data cutoff.

Reliable for AI research:

- Established science (physics, chemistry, biology fundamentals)
- Historical events and analysis
- Well-documented programming languages and frameworks (stable APIs)
- Classic business strategy and management theory
- Literary analysis and cultural commentary

Unreliable, source manually:

- Current regulations and legal requirements
- Market conditions, pricing, and competitive landscape
- Technology that is evolving rapidly (AI tools, new frameworks, recent APIs)
- Any field where AI itself is the subject (prompt engineering, AI best practices)
- Current events and recent developments
- Medical or scientific findings published after the training cutoff

The decision criterion is rate of change relative to the training cutoff. For any topic that changes faster than the model's training cycle, manual sourcing is essential. For stable domains where the underlying knowledge has not changed in years, AI research is a reasonable starting point — though verifying key claims against a primary source before building on them remains good practice.

The most dangerous zone is topics that feel stable but are not. Tax law changes annually. Building codes vary by jurisdiction and update regularly. Drug interactions and treatment guidelines evolve with new research. If the consequences of being wrong are significant, source manually regardless of perceived stability.
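The decision rule above can be written down as a rough check. This is a sketch under stated assumptions: the function name and its inputs are hypothetical, the change interval is your own estimate, and "changes faster than the cutoff" is interpreted here as "the domain has probably changed at least once since the model's cutoff".

```python
def needs_manual_sourcing(
    months_between_changes: float,
    months_since_cutoff: float,
    high_stakes: bool = False,
) -> bool:
    """Return True when the topic should be sourced by hand.

    months_between_changes: your rough estimate of how often the
        domain's accepted facts change (an assumption you supply).
    months_since_cutoff: time elapsed since the model's training cutoff.
    high_stakes: True if being wrong has significant consequences.
    """
    # Significant consequences override any perceived stability.
    if high_stakes:
        return True
    # The domain has likely changed at least once since the cutoff.
    return months_between_changes <= months_since_cutoff


# Illustrative values only: tax law changing yearly, cutoff 18 months ago.
tax_law = needs_manual_sourcing(months_between_changes=12, months_since_cutoff=18)
```

The numbers are guesses by design. The value of the exercise is being forced to estimate how fast a domain moves before trusting an answer about it.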

Frequently asked questions

- Does giving AI web search access solve the knowledge cutoff problem?

- Partially, but it introduces new problems. The web itself now contains a growing share of AI-generated content, SEO-optimised filler, and echo-chamber material. AI tools with web access can find current information, but they cannot reliably distinguish between authoritative and generated sources. Web search expands the boundary of what AI can access while reducing the average quality of what it finds.

- How can I tell if a source AI found is AI-generated?

- Three signals. First, multiple sources appeared within the same narrow window and say similar things using similar phrasing. Second, none of the sources cite primary research, empirical data, or named practitioners with verifiable track records. Third, the advice is generic enough to apply to anything, with no specific examples from actual implementations. When you see these patterns, the source material is likely AI-generated.

- Is this problem getting better or worse over time?

- Worse, for now. The volume of AI-generated content on the web is increasing. Search engines are adapting their algorithms, but the incentive structure that rewards SEO-optimised content has not changed. Until search infrastructure develops reliable mechanisms to surface authoritative content over generated filler, the human role in sourcing will remain essential.

- What counts as an authoritative source worth feeding into context?

- Textbooks from established publishers. Peer-reviewed journal articles. Official documentation from the technology or organisation you are working with. Government databases and regulatory publications. Reports from named analysts with verifiable expertise. The key criterion is traceability — can you follow the source back to a person or institution with accountability for its accuracy?
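The three signals from the second answer can be sketched as a simple checklist over a set of search results. This is an illustration, not a detector: the `Article` class, the `warning_signals` function, the 14-day window, and the 0.6 similarity threshold are all assumptions, and two of the fields rely on a human reading the article. Text similarity uses `difflib.SequenceMatcher` from the Python standard library as a crude stand-in for "similar phrasing".

```python
from dataclasses import dataclass
from datetime import date
from difflib import SequenceMatcher
from itertools import combinations


@dataclass
class Article:
    published: date
    text: str
    cites_primary: bool   # human judgment: cites research, data, or named people
    has_specifics: bool   # human judgment: gives examples from real implementations


def warning_signals(articles, window_days=14, similarity=0.6):
    """Report which of the three signals fire for a set of articles."""
    # Signal 1: any pair published close together with similar wording.
    clustered = any(
        abs((a.published - b.published).days) <= window_days
        and SequenceMatcher(None, a.text, b.text).ratio() >= similarity
        for a, b in combinations(articles, 2)
    )
    have_articles = bool(articles)
    return {
        "clustered_and_similar": clustered,
        # Signal 2: none of the sources cite primary material.
        "no_primary_citations": have_articles
        and not any(a.cites_primary for a in articles),
        # Signal 3: none of the sources offer specific examples.
        "only_generic_advice": have_articles
        and not any(a.has_specifics for a in articles),
    }
```

The function returns the individual signals and leaves the verdict to the reader, since the thresholds are arbitrary and the signals only suggest that material is likely AI-generated.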