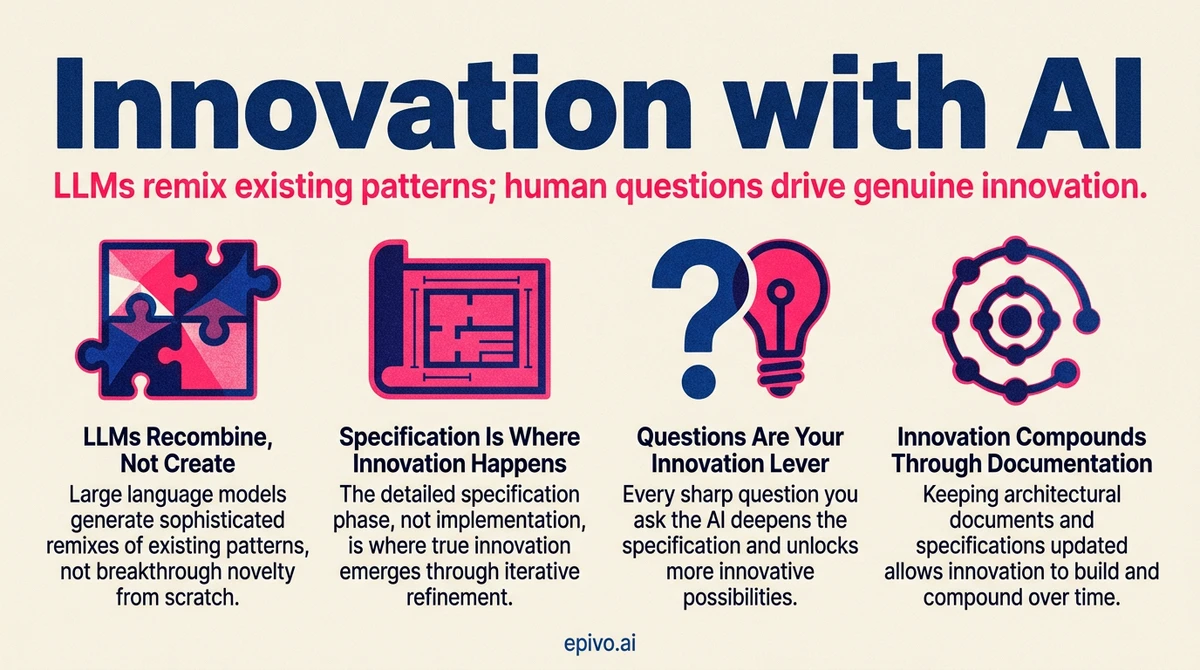

Why LLMs struggle with genuine novelty

Every response an LLM generates is a statistical prediction based on patterns in its training data. When you ask for something innovative, you get a sophisticated remix of ideas that already exist. The specific combination may be new, but every component has precedent.

This is not a bug; it is the nature of the technology. LLMs are pattern-matching engines trained on a vast body of recorded human knowledge, and they cannot step outside that boundary. If you ask an AI for "something nobody has done before," you will receive a confident-sounding arrangement of things many people have done before.

Recognizing this is the first step toward working around it. The builder who understands that AI excels at recombination but cannot produce genuine novelty will use it differently from the builder who expects breakthrough ideas on demand.

Interestingly, this limitation has an upside. Because LLMs have absorbed patterns from every field in their training data, they can combine ideas across domains that no single human expert spans. A builder working on an education product might benefit from patterns the AI absorbed from game design, behavioral psychology, and logistics. Cross-domain pattern transfer is where AI's statistical nature becomes an asset rather than a constraint.

Innovation through iteration

Innovation does not have to arrive as a single breakthrough moment. It can emerge from many small, well-executed steps that each individually follow the book.

Each step the AI suggests may be conventional. It draws on established patterns and proven approaches. But when you iterate through dozens of these steps, guided by a clear vision of the end goal, the cumulative result can be genuinely novel. The innovation lives in the composition, not in any single component.

This is the practical workaround. You hold the vision of what does not exist yet. The AI handles each incremental step using patterns from what does exist. You steer. It builds. After enough iterations, you look up and realise the outcome is something no one has built in quite this way before.

The key is the human role. Your vision, your questions, and your judgment about which direction to take at each fork — these are the ingredients the AI cannot supply. The more questions you ask, the more solid the end solution becomes.

Image: Generated with AI (Epivo)

The innovation specification workflow

When building something ambitious — whether a new product, a complex feature, or a full system — the specification phase is where innovation happens. Implementation is usually the shortest part.

Here is the workflow:

1. Start from the end goal. Explain what you want in terms of the value to be delivered and the user experience to be achieved. Describe what makes your vision unique. Do not start with technical requirements — start with outcomes.

2. Research what exists. Before writing a specification, have the AI research existing solutions in the space. This grounds your innovation in reality and reveals gaps your solution can fill.

3. Think through every problem. Consider all the possible problems you can see in the proposed solution and ask the AI about each one. Have it suggest options for addressing each problem. Either pick the option you prefer, or ask the AI to select whichever is best for your stated end goal.

4. Write the specification or Change Request. Once you have iterated through enough questions and options, ask the AI to formalise everything into a Change Request or system specification.

5. Review with agents. For complex or innovative work, have two agents review the specification rather than one. They will find weaknesses, and the AI will ask you whether to address them. Ask the AI to integrate the feedback it agrees with.

6. Iterate until confidence reaches 8.5. If the reviewers' confidence score is below 8.5 out of 10, ask the AI what needs to be done to reach 8.5. It will tell you, and you can simply ask it to do it. Repeat this for complex specifications until the score clears the threshold.

7. Your share matters. It is essential that you do your part by reiterating your goal, thinking through the suggested approach, and asking every question you have about it. The more questions you ask, the more solid the end solution will be.
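
The review loop in steps 5 and 6 can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: `Review`, `refine_spec`, `stub_reviewer`, and `stub_revise` are hypothetical names, the reviewers and the reviser stand in for real AI agents, and treating the lowest reviewer score as "the" confidence score is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Review:
    confidence: float       # reviewer's score out of 10
    weaknesses: List[str]   # issues the reviewer wants addressed


# Hypothetical signatures: a reviewer reads a spec and returns a Review;
# a reviser takes the spec plus collected weaknesses and returns a new spec.
Reviewer = Callable[[str], Review]
Reviser = Callable[[str, List[str]], str]


def refine_spec(spec: str, reviewers: List[Reviewer], revise: Reviser,
                threshold: float = 8.5, max_rounds: int = 5) -> Tuple[str, float]:
    """Review, then revise, until every reviewer reaches `threshold`.

    Performs at most `max_rounds` revisions (max_rounds >= 0). The returned
    score always belongs to the returned spec.
    """
    for round_number in range(max_rounds + 1):
        reviews = [review(spec) for review in reviewers]
        lowest = min(r.confidence for r in reviews)  # assumption: weakest score decides
        if lowest >= threshold or round_number == max_rounds:
            return spec, lowest
        spec = revise(spec, [w for r in reviews for w in r.weaknesses])


# Toy stand-ins so the sketch runs without any AI service.
def stub_reviewer(spec: str) -> Review:
    # Each addressed weakness raises the toy confidence by one point.
    score = min(10.0, 6.0 + spec.count("addressed:"))
    return Review(score, [] if score >= 8.5 else ["unclear error handling"])


def stub_revise(spec: str, weaknesses: List[str]) -> str:
    return spec + "".join(f"\naddressed: {w}" for w in sorted(set(weaknesses)))


final_spec, score = refine_spec("draft spec", [stub_reviewer, stub_reviewer], stub_revise)
print(score)  # 9.0 with these toy stubs
```

The `max_rounds` cap is a design choice added for the sketch so the loop cannot run forever if the score stalls below the threshold; the human still decides what to do in that case.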

The implementation part is usually the shortest step. Once it is done, for complex changes and system specifications, ask the AI to have two agents audit the implementation. Repeat this for a few rounds until the agents no longer find significant issues.
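
The audit rounds can be pictured as a loop that stops once a round turns up few findings. The sketch below is illustrative only: `audit_until_quiet`, the two toy auditors, and the `max_findings` cut-off of one finding are assumptions, since the workflow itself leaves "not much" to your judgment.

```python
from typing import Callable, List

# Hypothetical signature: an auditor inspects the implementation
# and returns a list of findings.
Auditor = Callable[[], List[str]]


def audit_until_quiet(auditors: List[Auditor],
                      fix: Callable[[List[str]], None],
                      max_findings: int = 1,
                      max_rounds: int = 4) -> List[int]:
    """Run audit rounds until one yields at most `max_findings` findings.

    Returns the number of distinct findings per round.
    """
    history = []
    for _ in range(max_rounds):
        # Merge both auditors' reports and drop duplicates.
        findings = sorted({f for audit in auditors for f in audit()})
        history.append(len(findings))
        if len(findings) <= max_findings:
            break
        fix(findings)
    return history


# Toy stand-ins: two auditors that each notice a different slice of the defects.
open_issues = ["a", "b", "c", "d", "e"]


def auditor_a() -> List[str]:
    return open_issues[:2]


def auditor_b() -> List[str]:
    return open_issues[-2:]


def fix(findings: List[str]) -> None:
    for finding in findings:
        open_issues.remove(finding)


history = audit_until_quiet([auditor_a, auditor_b], fix)
print(history)  # [4, 1]
```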

Research existing solutions

What other similar solutions are already out there? Do deep research online

Validate value delivery

Does the solution really deliver the exceptional user experience intended? Will it deliver the business value intended?

Dual review

Have 2 agents review the CR

Post-implementation audit

Audit the implementation with 2 agents

The human role: asking questions is the innovation lever

The AI cannot innovate for you. What it can do is respond to your questions with extraordinary depth and breadth.

Every question you ask sharpens the specification. Every problem you raise gets addressed. Every option you evaluate narrows the solution space toward the right outcome. The builder who asks fifty questions during specification ends up with a far more robust plan than the builder who accepts the first draft.

This is where the Socratic approach — questions over instructions — proves its value for innovation specifically. You are not telling the AI what to build. You are interrogating the design until it is solid from every angle. The AI's knowledge base is vast. Your questions are what unlock the specific subset of that knowledge relevant to your unique problem.

The paradox resolves itself here. The AI's statistical nature means it has absorbed a vast amount of what already exists. Your questions are what direct it toward the specific combination that does not exist yet.

From single session to parallel tracks

The intensive specification workflow described above is meant for the initial implementation of a solution or for complex changes to an existing system. During this phase, it is best to work in a single chat session so that context builds naturally.

Once the first iteration of the system is built end to end — even if not yet fully working — the dynamic changes. At that point you can start parallel sessions. Report each bug or idea for adjustment in a separate chat session. Each session follows the standard CR workflow autonomously.

This is where speed increases drastically. You can run up to ten separate tracks simultaneously, each one fixing a bug, adjusting a feature, or refining a detail. The intensive single-session phase produces the architecture and the first working version. The parallel phase is what turns that version into a polished product at a pace no traditional team can match.
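
If the sessions were driven from a script rather than opened by hand, the parallel phase might look like the sketch below. It is a minimal illustration under stated assumptions: `run_session` is a hypothetical placeholder for one chat session working through the CR workflow, and `MAX_TRACKS` simply encodes the ten-track ceiling mentioned above.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

MAX_TRACKS = 10  # upper bound on simultaneous tracks, taken from the text


def run_session(item: str) -> str:
    # Hypothetical placeholder: a real version would open a separate chat
    # session and let it follow the CR workflow for this one item.
    return f"CR drafted for: {item}"


def run_parallel_tracks(items: List[str],
                        session: Callable[[str], str] = run_session,
                        max_tracks: int = MAX_TRACKS) -> Dict[str, str]:
    """Run one independent session per item, at most `max_tracks` at a time."""
    if not items:
        return {}
    workers = min(max_tracks, len(items))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() keeps results in the same order as the inputs.
        results = list(pool.map(session, items))
    return dict(zip(items, results))


tracks = run_parallel_tracks(["login bug", "slow export", "rename button"])
for item, outcome in tracks.items():
    print(item, "->", outcome)
```

Each item gets its own session with no shared state, which mirrors the advice to report every bug or adjustment in a separate chat.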

Keeping your documentation alive so innovation compounds

After a complex implementation is verified, always ask the AI to ensure that architectural documents and the system specification are up to date. If it does not do this automatically, ask it to add that instruction to your CLAUDE.md file so it becomes a permanent behaviour.

This matters for innovation specifically because innovative systems are, by definition, unlike anything that came before. If the documentation falls behind, the next AI session works from a stale mental model. Stale documentation on an innovative system is worse than no documentation at all — the AI will confidently follow instructions that no longer match reality.

The practice is simple: after every major change, ask the AI to update the docs. Then ask it to add that rule to the project's core instructions. You set the rule once. The AI follows it in every later session that loads those instructions. Your innovation compounds because every session starts with an accurate understanding of what the system currently looks like.
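
The approach described here is to ask the AI to add the rule. If you would rather add it yourself from a script, a minimal sketch could look like the following. The wording in `DOCS_RULE` and the helper name `ensure_docs_rule` are hypothetical; adapt both to your project.

```python
from pathlib import Path

# Hypothetical wording; phrase the rule however suits your project.
DOCS_RULE = (
    "- After every major change, update the architectural documents "
    "and the system specification before closing the task."
)


def ensure_docs_rule(instructions_file: Path, rule: str = DOCS_RULE) -> bool:
    """Append `rule` to the file unless it is already there.

    Returns True if the rule was added, False if it was already present.
    """
    if instructions_file.exists():
        existing = instructions_file.read_text(encoding="utf-8")
    else:
        existing = ""
    if rule in existing:
        return False
    # Start the rule on its own line if the file does not end with a newline.
    separator = "" if existing == "" or existing.endswith("\n") else "\n"
    instructions_file.write_text(existing + separator + rule + "\n", encoding="utf-8")
    return True
```

Calling `ensure_docs_rule(Path("CLAUDE.md"))` twice adds the rule only once, so the function is safe to rerun.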

Documentation maintenance

Ensure that architectural docs are up to date

Frequently asked questions

- Can AI really produce innovative outcomes if it only recombines existing patterns?

- Yes, through iteration. Each individual step may follow established patterns, but the cumulative result of many well-directed steps — guided by a human vision — can produce outcomes that are genuinely novel. Innovation lives in the composition, not in any single component.

- When should I use the 8.5 confidence threshold instead of the standard 7?

- Use the higher threshold for complex changes, full system specifications, and anything where you are building something novel. Novel work has more unknown unknowns, and a higher threshold forces review agents to dig deeper and catch issues that standard review would miss.

- Why use two review agents instead of one?

- Two reviewers approach the specification from different angles. One might focus on technical feasibility while the other evaluates whether the plan delivers the stated business value. For complex or innovative work, the second agent catches systematic blind spots that a single reviewer might share with the author.

- When should I switch from a single session to parallel tracks?

- Once the first iteration of the system is built end to end — even if not fully working — you can start parallel sessions. Report each bug or adjustment in a separate chat session. This can scale up to ten simultaneous tracks, drastically increasing speed.

- Why is the implementation phase the shortest part?

- Because the specification phase front-loads all the thinking. By the time implementation begins, the plan has been stress-tested through multiple review cycles. The AI follows a blueprint that already survived scrutiny, so execution is straightforward.