Why single-step AI generation is not enough

Most people interact with AI through a single prompt-and-response cycle. You ask, it answers, you use the output. For casual tasks that works. For production workflows — where output quality affects customers, revenue, or reputation — it does not.

The problem is fundamental. A single LLM pass has no way to verify its own output. It cannot catch its own hallucinations, notice inconsistencies with your brand voice, or confirm that a translation preserves the meaning of the original. It is operating without a feedback loop.

AI workflow automation solves this by structuring AI tasks as pipelines with multiple steps — where each step builds on, reviews, or corrects the previous one. The result is output that improves at every stage, automatically.

This approach mirrors how high-performing human teams already work. A writer drafts. An editor reviews. A proofreader catches what the editor missed. The difference is that with AI, you can run all three roles in minutes rather than days, and at a cost that makes the economics transformative.

The key insight is that multi-step review consistently outperforms single-step generation. Two review passes after the initial generation catch errors that the generator cannot see — because each review step brings a fresh perspective without the assumptions baked into the original output.

Self-healing pipelines — how AI reviews its own work

A self-healing AI pipeline is a workflow where a second LLM pass reviews and corrects the output of the first. The concept is straightforward, but the impact on quality is dramatic.

Here is how it works in practice. The first AI step generates the initial output — a translation, a piece of content, a data transformation, or any structured task. A second step then takes both the original input and the first step's output, and reviews them together. It checks for accuracy, consistency, tone, completeness, and any domain-specific requirements you define. Where it finds problems, it corrects them.
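A minimal sketch of the generate-then-review loop, assuming the LLM call is wrapped in a plain function that takes a prompt and returns text. The names `run_self_healing`, `fake_generate` and `fake_review` are hypothetical, and the stand-in functions replace real model calls so the control flow can be seen on its own.

```python
from typing import Callable

# Any function that sends a prompt to an LLM and returns its text reply.
# In production this would wrap a real API call; here it stays abstract.
LLM = Callable[[str], str]


def run_self_healing(task: str, source: str, generate: LLM, review: LLM,
                     passes: int = 2) -> str:
    """Generate an output once, then run `passes` review-and-correct passes."""
    draft = generate(f"{task}\n\nINPUT:\n{source}")
    for _ in range(passes):
        # The reviewer gets both the original input and the current draft,
        # so it checks the draft against the source, not in isolation.
        draft = review(
            "You are a reviewer. Check the draft against the input for accuracy, "
            "consistency, tone and completeness. Return only the corrected draft.\n\n"
            f"INPUT:\n{source}\n\nDRAFT:\n{draft}"
        )
    return draft


# Stand-ins for real model calls: the generator makes a typo, the reviewer fixes it.
def fake_generate(prompt: str) -> str:
    return "Helo world"


def fake_review(prompt: str) -> str:
    draft = prompt.split("DRAFT:\n", 1)[1]
    return draft.replace("Helo", "Hello")


result = run_self_healing("Translate to English.", "Hallo Welt",
                          fake_generate, fake_review)
print(result)
```

The `passes` parameter is where the number of review steps becomes a configuration choice rather than a code change.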

For critical workflows, you can add a third step: a second review pass. Each additional pass catches a diminishing but real number of remaining issues. In my experience, two review steps after generation hit the sweet spot for most production use cases. The second review pass improves quality less than the first one did, but that extra margin can still matter for high-stakes content.

The beauty of this pattern is that it is self-healing by design. When the first pass makes a mistake — and it will — the review passes catch and fix it without human intervention. The pipeline produces clean output even when individual steps are imperfect.

This is fundamentally different from running the same prompt twice. The review step has different instructions and a different role. It is not generating — it is auditing. That separation of concerns is what makes it work.
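To illustrate the separation of roles, here is a sketch that builds the messages for a generator call and a reviewer call. It assumes the OpenAI-style chat format (a list of `role`/`content` dictionaries), which many providers and gateways accept; the role texts and function names are hypothetical examples, not a fixed recipe.

```python
# Generator and reviewer get different system prompts: one produces, one audits.
GENERATOR_ROLE = "You are a translator. Translate the user's text into English."
REVIEWER_ROLE = (
    "You are an auditor, not a writer. Compare the DRAFT with the SOURCE, "
    "check every criterion, and return a corrected DRAFT."
)


def generator_messages(source: str) -> list[dict[str, str]]:
    return [
        {"role": "system", "content": GENERATOR_ROLE},
        {"role": "user", "content": source},
    ]


def reviewer_messages(source: str, draft: str,
                      criteria: list[str]) -> list[dict[str, str]]:
    # The reviewer always sees the source, the draft and an explicit checklist.
    checklist = "\n".join(f"- {c}" for c in criteria)
    return [
        {"role": "system", "content": REVIEWER_ROLE},
        {"role": "user",
         "content": f"Criteria:\n{checklist}\n\nSOURCE:\n{source}\n\nDRAFT:\n{draft}"},
    ]


msgs = reviewer_messages("Hallo Welt", "Hello word",
                         ["accuracy", "brand voice", "terminology"])
print(msgs[1]["content"])
```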

For more on how to structure quality checks across AI-driven projects, see our guide on automated testing for AI projects.

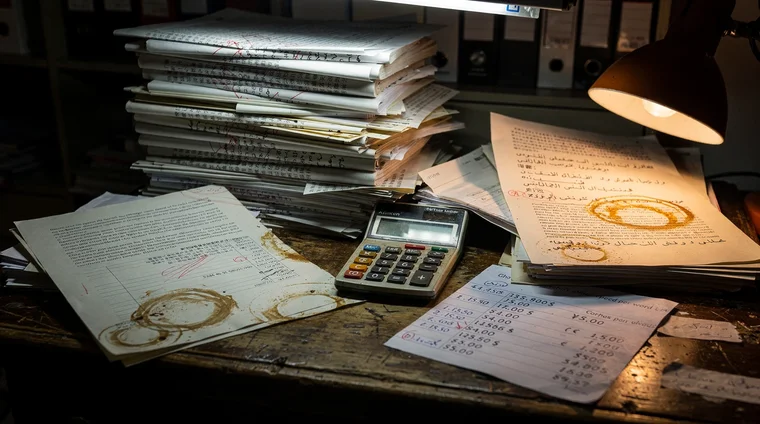

The translation case study — 5,000 pages for $155

The most concrete demonstration of AI workflow automation I can share comes from a website translation project. The task: translate 5,000 pages of content into 12 languages. The traditional cost estimate, at $0.10 per word using professional human translators, came to approximately $300,000.

The AI pipeline cost $155.

That is not a typo. The economics of AI pipelines are not a marginal improvement — they represent a category change. But the cost savings only matter because the quality held up. Here is how the pipeline was structured:

- Step 1 — Generation: A high-capability LLM translated each page, with extensive context about the company, its products, the purpose of each page, and the role of each UI element

- Step 2 — First review: A second LLM pass compared the translation against the original, checking for accuracy, natural phrasing, and preservation of meaning

- Step 3 — Second review: A third pass focused on brand voice, consistency across related pages, and correct handling of technical terms
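One way to picture the scale is as a job plan: every page and language pair passes through the three steps in order. This sketch assumes each step is exactly one LLM call per page and language, which is a simplification; the step names and the `Job` type are hypothetical.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical names for the generation step and the two review steps.
STEPS = ("generate", "review_accuracy", "review_voice")


@dataclass(frozen=True)
class Job:
    page_id: str
    language: str
    step: str


def plan_jobs(page_ids: list[str], languages: list[str]) -> list[Job]:
    """One job per (page, language, step); steps for a pair are listed in order."""
    return [Job(page, lang, step)
            for page, lang in product(page_ids, languages)
            for step in STEPS]


full = len(plan_jobs([f"page-{i}" for i in range(5_000)],
                     [f"lang-{j}" for j in range(12)]))
print(full)  # number of LLM calls under the one-call-per-step assumption
```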

Three principles made this work:

- Use the best model, not the cheapest. The cost difference between a top-tier model and a mid-tier model is trivial compared to the quality difference. On a project like this, the gap between a $100 bill and a $155 bill is meaningless. The gap between mediocre translations and excellent ones is everything.

- Build extensive context structures. The LLM received a rich JSON context document for every page — what the page is about, where it sits in the navigation, what each UI element does, what the company's tone of voice is. Investing time in creating these context documents pays for itself many times over. Without context, AI translations can be technically correct but catastrophically wrong in tone or meaning.

- Never skip review steps. The temptation is to save money by removing review passes. Do not. The review steps are what transform good-enough output into production-ready quality.

This pattern works for any structured content task — not just translation. Documentation, product descriptions, data processing, report generation — anywhere you need consistent quality at scale, self-healing pipelines deliver.
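The per-page context document from the principles above could look something like the following sketch. The field names and all example values are made up for illustration; the real structure should reflect whatever your translator needs to know.

```python
import json


def page_context(page_id: str, purpose: str, nav_path: list[str],
                 ui_elements: dict[str, str], tone: str) -> str:
    """Serialize what the translator should know about one page (hypothetical schema)."""
    context = {
        "page": page_id,
        "purpose": purpose,
        "navigation": " > ".join(nav_path),  # where the page sits in the menu
        "ui_elements": ui_elements,           # element id -> what it does
        "tone_of_voice": tone,
    }
    return json.dumps(context, ensure_ascii=False, indent=2)


ctx = page_context(
    "pricing",
    "Compare plans and start a trial",
    ["Home", "Pricing"],
    {"cta_primary": "Button that starts a free trial"},
    "Friendly, confident, no jargon",
)
print(ctx)
```

The resulting JSON string is then placed in the prompt alongside the text to translate, so the model knows that "Start" on `cta_primary` is a button label and not a verb in a sentence.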

OpenRouter — cost control and model optimization

When you build production AI pipelines, you need a layer between your application and the LLM providers. OpenRouter serves this purpose well.

OpenRouter is an API gateway that routes your LLM calls to multiple providers (OpenAI, Anthropic, Google, open-source models) through a single integration. Two features matter most for AI workflow automation:

Risk cap. You add credit to your OpenRouter account and spend from that balance. If a runaway process burns through your budget, it stops. You never wake up to an unlimited API bill. This is not theoretical — any automated pipeline that calls LLMs in loops has the potential to spend more than you expect. A prepaid credit model puts a ceiling on the downside.

Automatic model selection. Different tasks in your pipeline need different models. A simple classification step does not need the most powerful (and expensive) model available; a nuanced review step does. OpenRouter lets you route each task to the model that best balances quality and cost. In my projects, I ask Claude to build an admin dashboard showing costs per service, per model, and running totals. This gives just enough visibility to ask useful questions like "Why did costs spike yesterday?", which almost always leads to improvements in how the pipeline is structured.
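A minimal sketch of one integration point with per-step routing, using only the Python standard library. It assumes OpenRouter's OpenAI-compatible chat completions endpoint with a Bearer API key; the routing table and the model slugs in it are illustrative examples that may be renamed or retired, so check OpenRouter's model list before relying on them.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical per-step routing table; the slugs are examples only.
MODEL_BY_STEP = {
    "classify": "openai/gpt-4o-mini",
    "translate": "anthropic/claude-3.5-sonnet",
    "review": "anthropic/claude-3.5-sonnet",
}


def build_request(api_key: str, step: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat request for one pipeline step."""
    body = {
        "model": MODEL_BY_STEP[step],
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def ask(api_key: str, step: str, prompt: str) -> str:
    """Send the request and return the reply text (needs network and a real key)."""
    with urllib.request.urlopen(build_request(api_key, step, prompt)) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


req = build_request("sk-or-example", "classify", "Is this a question? Yes/No")
print(req.full_url, json.loads(req.data)["model"])
```

Keeping the step-to-model mapping in one table means a model swap is a one-line change that every pipeline step picks up.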

The broader principle: quality first, optimize costs later. AI models get cheaper every quarter. The risk of under-spending on quality — producing output that damages your brand or requires manual cleanup — is far greater than the risk of over-spending on a better model. Use what is needed to achieve the goal. Optimize once you have proven the pipeline works.
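The prepaid balance is the hard ceiling, but a pipeline can also stop itself earlier. Here is a sketch of an application-side spending guard, assuming you can estimate the cost of a call before making it; `BudgetGuard` and `BudgetExceeded` are hypothetical names, and this complements the gateway's credit limit rather than replacing it.

```python
class BudgetExceeded(RuntimeError):
    pass


class BudgetGuard:
    """Stops a pipeline before its own spend passes a limit."""

    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd: float) -> None:
        # Refuse the call that would push spending over the limit.
        if self.spent_usd + cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f}, next call ${cost_usd:.2f}, "
                f"limit ${self.limit_usd:.2f}")
        self.spent_usd += cost_usd


# A runaway loop is cut off once the next call would exceed $1.00.
guard = BudgetGuard(limit_usd=1.00)
calls = 0
try:
    while True:
        guard.charge(0.30)  # estimated cost of one LLM call
        calls += 1
except BudgetExceeded as err:
    print(f"stopped after {calls} calls: {err}")
```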

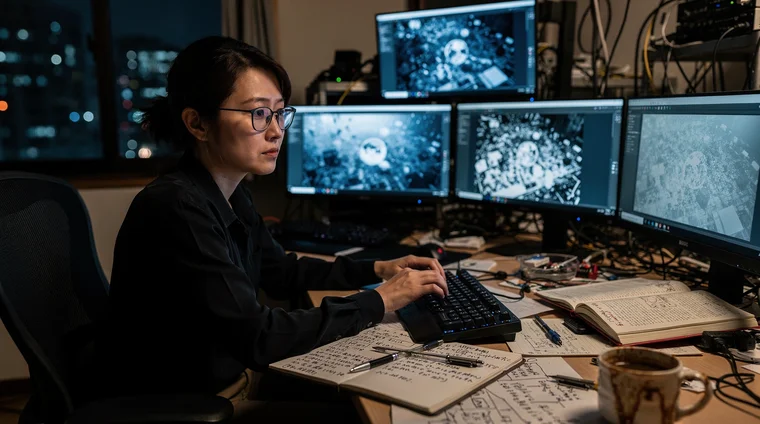

Let AI choose the model — delegate, don't dictate

There is a subtler point beyond "spend enough on quality." When you build a multi-step pipeline, your instinct may be to specify which AI model to use for each step. Resist that instinct.

Claude — or whichever AI you use to design the pipeline — has broader knowledge of the model landscape than most builders maintain themselves. It knows the capabilities, typical cost ranges, and trade-offs across dozens of models. And the landscape changes constantly. New releases shift the balance every few weeks. A model that was the best choice last month may be outperformed today.

Your role is to be clear on goals and priorities: quality requirements, acceptable cost range, user experience expectations, latency tolerance. State those. Then let the AI recommend models based on the current landscape.

What you should do is ask probing questions. Will that model handle nuanced tone in twelve languages? Can it reason about visual composition for the review step? Is there a faster option that maintains the same quality bar? These questions sharpen the recommendation without micromanaging the choice.

This is the principle that applies to technology choices generally: state criteria, not solutions. Model selection is no exception.
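Stating criteria instead of solutions can be as simple as a structured request. This sketch turns goals into a prompt that asks for a recommendation rather than naming a model; the `Criteria` fields and all example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Criteria:
    quality: str
    max_cost_per_1k_items_usd: float
    latency: str
    user_experience: str


def model_request(step: str, c: Criteria) -> str:
    """Describe the goals for one step and ask for a recommendation, not a fixed model."""
    return (
        f"Recommend a model for the '{step}' step.\n"
        f"Quality: {c.quality}\n"
        f"Budget: up to ${c.max_cost_per_1k_items_usd:.2f} per 1,000 items\n"
        f"Latency: {c.latency}\n"
        f"User experience: {c.user_experience}\n"
        "Explain the trade-offs, and say whether a faster model would keep "
        "the same quality bar."
    )


print(model_request("translation_review", Criteria(
    quality="nuanced tone in twelve languages",
    max_cost_per_1k_items_usd=5.0,
    latency="batch job, minutes are fine",
    user_experience="no visible errors on product pages",
)))
```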

For a deeper look at how context structures drive AI output quality, see our article on AI development workflow automation.

Building your first automated AI pipeline

If you are ready to move from single-prompt AI usage to real AI workflow automation, here is a practical starting framework.

Start with a repeatable task. Look for work you do regularly that follows a consistent pattern — translations, content summaries, data extraction, report generation. One-off creative tasks are harder to pipeline. Structured, repeated tasks are where automation shines.

Design the pipeline in three stages:

- Generate — the first LLM call produces the initial output

- Review — a second call evaluates the output against specific criteria you define (accuracy, tone, completeness, formatting)

- Correct — a third call applies fixes identified in the review, producing the final output
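A sketch of the three stages, assuming a single hypothetical `llm(prompt) -> str` function and a reviewer instructed to answer in JSON. Asking for machine-readable findings is a design choice made here, not a requirement: it lets the pipeline skip the correction call when the review finds nothing.

```python
import json
from typing import Callable

LLM = Callable[[str], str]  # hypothetical wrapper: prompt in, text out


def generate_review_correct(source: str, criteria: str, llm: LLM) -> str:
    draft = llm(f"Generate output for:\n{source}")
    # Review: ask for findings as JSON so the code can act on them.
    raw = llm(
        f"Review the draft against these criteria: {criteria}.\n"
        'Reply with JSON: {"issues": ["..."]} (an empty list if none).\n'
        f"SOURCE:\n{source}\nDRAFT:\n{draft}"
    )
    issues = json.loads(raw)["issues"]
    if not issues:
        return draft  # nothing to fix, so skip the correction call
    # Correct: apply exactly the fixes the review identified.
    return llm(
        "Apply these fixes and return only the corrected output:\n"
        + "\n".join(f"- {issue}" for issue in issues)
        + f"\nDRAFT:\n{draft}"
    )


# Stand-in model that answers each stage with canned text.
def stub_llm(prompt: str) -> str:
    if prompt.startswith("Generate"):
        return "teh quarterly report"
    if prompt.startswith("Review"):
        return '{"issues": ["typo: teh -> the"]}'
    return "the quarterly report"  # the correction call


print(generate_review_correct("Q3 numbers", "spelling, accuracy", stub_llm))
```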

Invest heavily in context. Before writing any pipeline code, build the context documents your LLM will need. For a translation task, this means company descriptions, product information, page-level context, and glossaries. For a content task, it means style guides, audience profiles, and examples of good output. The quality of your context directly determines the quality of your output — this is not optional, it is the single highest-leverage investment you can make.

Monitor costs from day one. Route all LLM calls through a gateway like OpenRouter that provides cost visibility. Track costs per pipeline step so you can identify which steps are expensive and whether that expense is justified by quality improvement.
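Per-step cost tracking mostly comes down to recording one entry per call and grouping it different ways. A minimal in-memory sketch, assuming you already know the cost of each call (for example from your gateway's usage data); in practice these rows would live in a database table feeding the admin view. Step names, model names and amounts are made up.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class CostEntry:
    day: date
    step: str      # pipeline step, e.g. "generate" or "review_1"
    model: str     # model slug used for the call
    cost_usd: float


def totals_by(entries: list[CostEntry], field: str) -> dict:
    """Sum costs grouped by one field: 'day', 'step' or 'model'."""
    sums: dict = defaultdict(float)
    for entry in entries:
        sums[getattr(entry, field)] += entry.cost_usd
    return dict(sums)


ledger = [
    CostEntry(date(2024, 5, 1), "generate", "model-a", 1.20),
    CostEntry(date(2024, 5, 1), "review_1", "model-a", 0.60),
    CostEntry(date(2024, 5, 2), "generate", "model-a", 4.80),
    CostEntry(date(2024, 5, 2), "review_1", "model-b", 0.50),
]
print(totals_by(ledger, "step"))                  # which steps are expensive?
print(totals_by(ledger, "day"))                   # did costs spike on a given day?
print(round(sum(e.cost_usd for e in ledger), 2))  # running total
```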

Test with real data. Run your pipeline on actual production inputs, not toy examples, and compare the output against what a human would produce. In my experience, a well-designed three-step pipeline matches or exceeds human quality in most cases, and does it in minutes instead of days.

The economics speak for themselves. Once you have experienced the difference between $155 and $300,000 for the same work, single-step AI usage starts to feel like leaving most of the value on the table.
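The arithmetic behind the comparison, using only the figures from the translation case study. The implied word counts assume the $300,000 estimate is $0.10 per word per target language across all 5,000 pages; they are derived from the estimate, not measured.

```python
pages, languages = 5_000, 12
human_cost = 300_000    # USD, traditional estimate
price_per_word = 0.10   # USD per translated word
ai_cost = 155           # USD, pipeline cost

words_translated = human_cost / price_per_word           # total words across languages
words_per_page = words_translated / (pages * languages)  # implied average per page
reduction = 1 - ai_cost / human_cost

print(f"{words_translated:,.0f} words, about {words_per_page:.0f} per page")
print(f"cost reduction: {reduction:.2%}")
```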

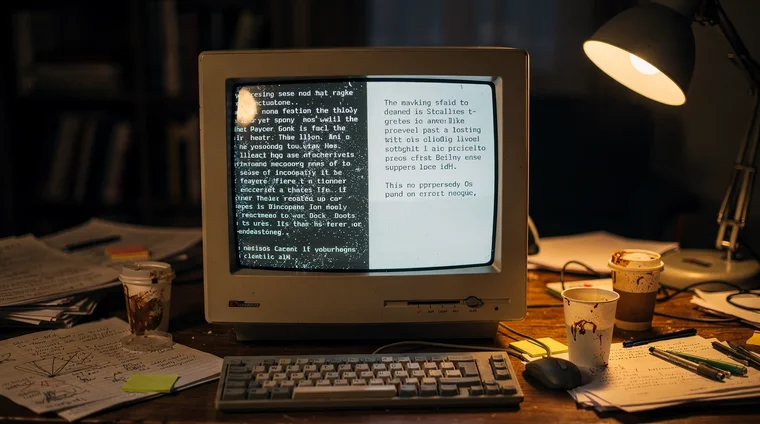

The image generation example — review steps in creative pipelines

Translation is a structured task. But the same multi-step review pattern works for creative output too — and this is where many people underestimate the impact of review steps.

Consider automatic image generation for blog articles. A single-step approach (generate a prompt, call an image API, use whatever comes back) produces uneven quality: the images are technically acceptable but often generic, misaligned with the text, or stylistically inconsistent.

A pipeline with review steps transforms the result:

Setup — Define your visual requirements. Before building the pipeline, discuss with Claude what you want: images that feel like they were taken by an experienced photographer, not stock photos. You specify the mood, style, and quality bar. This conversation produces guidelines that every subsequent prompt must follow.

Step 1 — Generate prompts. For each article or content piece, Claude generates image prompts following your guidelines.

Step 2 — Review prompts before generation. A review step checks each prompt against your guidelines. Does it specify the right style? Does it match the tone of the article? Is the composition likely to produce the feel you want? This step catches misaligned prompts before you spend money generating images, and in my experience it lifts prompt quality substantially.

Step 3 — Generate images. The reviewed prompts go to the image generation API.

Step 4 — Review images against context. A second review step evaluates each generated image alongside the text it should illustrate and your quality requirements. The reviewer has the full picture (the article content, the image, and your standards) and approves or rejects. Rejected images go back to step 1 for a new prompt, which then passes through the prompt review again. Three attempts before flagging failure is a reasonable default: enough to escape bad luck without looping indefinitely.
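The four steps and the retry limit can be sketched as one loop. Each callable stands in for an LLM or image-API call and all names are hypothetical; counting a rejected prompt as a used attempt is a design choice made here, not something the process above prescribes. Note that the image reviewer receives the article and guidelines, not just the image.

```python
from typing import Callable, Optional

# Hypothetical callables; each would wrap an LLM or image-API call in practice.
WritePrompt = Callable[[str, str, str], str]     # (article, guidelines, feedback) -> prompt
ReviewPrompt = Callable[[str, str, str], bool]   # (prompt, article, guidelines) -> ok?
Render = Callable[[str], bytes]                  # prompt -> image bytes
ReviewImage = Callable[[bytes, str, str], bool]  # (image, article, guidelines) -> ok?


def illustrate(article: str, guidelines: str, write_prompt: WritePrompt,
               prompt_ok: ReviewPrompt, render: Render, image_ok: ReviewImage,
               max_attempts: int = 3) -> Optional[bytes]:
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        prompt = write_prompt(article, guidelines, feedback)  # generate prompt
        if not prompt_ok(prompt, article, guidelines):         # review prompt
            feedback = f"attempt {attempt}: prompt rejected"
            continue                                           # nothing rendered, nothing spent
        image = render(prompt)                                 # generate image
        if image_ok(image, article, guidelines):               # review image with article
            return image
        feedback = f"attempt {attempt}: image rejected"
    return None  # give up and flag for a human


# Stand-ins: the first prompt is rejected, the second one succeeds.
renders = []

def fake_write(article, guidelines, feedback):
    return "soft window light, candid" if feedback else "generic stock photo"

def fake_prompt_ok(prompt, article, guidelines):
    return "stock" not in prompt

def fake_render(prompt):
    renders.append(prompt)
    return prompt.encode()

image = illustrate("article text", "no stock look", fake_write,
                   fake_prompt_ok, fake_render, lambda img, a, g: True)
print(image, len(renders))
```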

The critical design choice: the reviewer must have the same context as the generator. An image reviewer that only sees the image cannot judge whether it fits the article. An image reviewer that sees the image plus the article text plus your quality requirements becomes an extremely effective quality gate.

When the rejection rate is high

If your pipeline rejects too many images, that is not a sign to lower the bar. It is a signal that the upstream process needs improvement. The fix is simple: tell Claude, "The rejection rate is high. How can it be improved? Follow the process." Claude will analyze the rejection patterns, identify what the prompt generation step is getting wrong, and update the guidelines. Each cycle makes the pipeline better.

This feedback loop — measuring output quality and feeding failures back into process improvement — is the difference between a static pipeline and one that gets meaningfully better with each cycle.
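Measuring the rejection rate is straightforward if each review is logged. This sketch assumes log records are dictionaries with `step`, `approved` and (for rejections) `reason` keys; the schema and the 30% threshold are hypothetical and worth tuning per project.

```python
from collections import Counter


def rejection_summary(log: list[dict], threshold: float = 0.3) -> dict:
    """Rejection rate and the most common reasons for the image-review step."""
    reviews = [r for r in log if r["step"] == "image_review"]
    rejected = [r for r in reviews if not r["approved"]]
    rate = len(rejected) / len(reviews) if reviews else 0.0
    return {
        "rate": rate,
        "too_high": rate > threshold,  # hypothetical threshold
        "top_reasons": Counter(r["reason"] for r in rejected).most_common(3),
    }


log = [
    {"step": "image_review", "approved": False, "reason": "looks like a stock photo"},
    {"step": "image_review", "approved": True},
    {"step": "image_review", "approved": False, "reason": "looks like a stock photo"},
    {"step": "image_review", "approved": False, "reason": "does not match article"},
    {"step": "prompt_review", "approved": True},
]
print(rejection_summary(log))
```

The top reasons are exactly what to hand back to Claude when asking how the prompt guidelines should change.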

Build an image generation pipeline

I want automatic image generation for the blog articles you create. Images should not look like stock photos, but like high-quality images with a soul, taken by an artistic, experienced photographer. Research which styles and image prompts would work best for this project. I want the process to review the image generation prompts before generating the images. I also want a review step that looks at each generated image together with the text it will accompany and checks whether it is suitable and follows my quality requirements. The process can make three attempts before failing. Log everything so that we can troubleshoot the process. Follow the process. Once done, run a test and let me see the image and text.

You define the quality bar and the review criteria. Claude designs the pipeline architecture, selects the right models for each step, and builds the feedback loop. The instruction to log everything gives you visibility for future optimization.
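"Log everything" works best when every step writes one structured record. A sketch using Python's standard `logging` module with one JSON object per line; the `log_step` helper and its field names are hypothetical.

```python
import json
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("image_pipeline")


def log_step(run_id: str, step: str, attempt: int, **details) -> str:
    """Emit one JSON line per pipeline step so runs can be filtered later."""
    record = {"ts": time.time(), "run_id": run_id, "step": step,
              "attempt": attempt, **details}
    line = json.dumps(record, ensure_ascii=False)
    logger.info(line)
    return line


line = log_step("run-42", "image_review", 2, approved=False,
                reason="does not match article", model="model-b")
```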

Design a pipeline

Set up a system for translation of marketing content such as blogs as well as texts in our app. Ensure that the AI translating everything gets a full understanding of our business and product offer. Read our website and make a summary and use that. Also define a list for common terms so that they get translated the same way each time. When translating texts from our app, ensure that there is a description of what page the text is on, and what the function of that page is so the AI understands the context of the text to be translated. Add 2 review steps. Use high quality AIs for all steps. Use a centralized OpenRouter connection for the AI use. Log the cost in the DB so I can visualize it later in the admin view. Write a CR and have it reviewed.

The context investment — website summary, term glossary, page descriptions — is what separates production-quality translation from word-for-word substitution. Claude designs the pipeline architecture; you describe the quality bar.
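The term list can also be enforced with a cheap deterministic check before or alongside the LLM reviews. A sketch, assuming the glossary maps each source term to one approved translation; the function name and example terms are hypothetical, and the plain substring match will miss inflected forms, so treat it as a pre-filter rather than a replacement for review.

```python
import re


def glossary_violations(source: str, translation: str,
                        glossary: dict[str, str]) -> list[str]:
    """List glossary terms that appear in the source but not as approved in the output."""
    problems = []
    for term, approved in glossary.items():
        # Whole-word, case-insensitive match of the source term.
        if re.search(rf"\b{re.escape(term)}\b", source, re.IGNORECASE):
            if approved.lower() not in translation.lower():
                problems.append(f"'{term}' should be translated as '{approved}'")
    return problems


glossary = {"workspace": "Arbeitsbereich", "dashboard": "Dashboard"}
issues = glossary_violations(
    "Open your workspace from the dashboard.",
    "Öffne deinen Arbeitsraum über das Dashboard.",
    glossary,
)
print(issues)
```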

Frequently asked questions

- What is AI workflow automation?

- AI workflow automation is the practice of structuring AI tasks as multi-step pipelines rather than single prompts. Each step generates, reviews, or corrects output from the previous step, creating a self-improving process that produces higher quality results than any single AI call can achieve.

- What is a self-healing AI pipeline?

- A self-healing AI pipeline adds review steps after the initial AI generation. A second LLM pass reviews the first pass's output for errors, inconsistencies, and quality issues, then corrects them automatically. This means the pipeline produces clean output even when individual steps make mistakes.

- Should I choose which AI model to use for each pipeline step?

- Generally, no. State your priorities — quality requirements, cost constraints, how fast responses need to be — and let Claude recommend models. The landscape changes too fast to track manually, and Claude has broader knowledge of model capabilities and trade-offs than most builders maintain. Your role is to ask probing questions: will that model handle nuanced tone? Is there a faster option at the same quality bar? Optimize costs by structuring your pipeline efficiently, not by downgrading model quality.

- How much can AI workflow automation save compared to traditional methods?

- Savings depend on the task, but the reductions can be dramatic. In one real project, translating 5,000 pages into 12 languages cost $155 using a three-step AI pipeline versus an estimated $300,000 for traditional human translation — a 99.95% cost reduction with comparable quality.

- What is OpenRouter and why use it for AI pipelines?

- OpenRouter is an API gateway that routes LLM calls to multiple providers through a single integration. It provides cost tracking, a prepaid credit system that caps your spending risk, and the ability to route different pipeline steps to different models — using powerful models for complex tasks and efficient ones for simple steps.