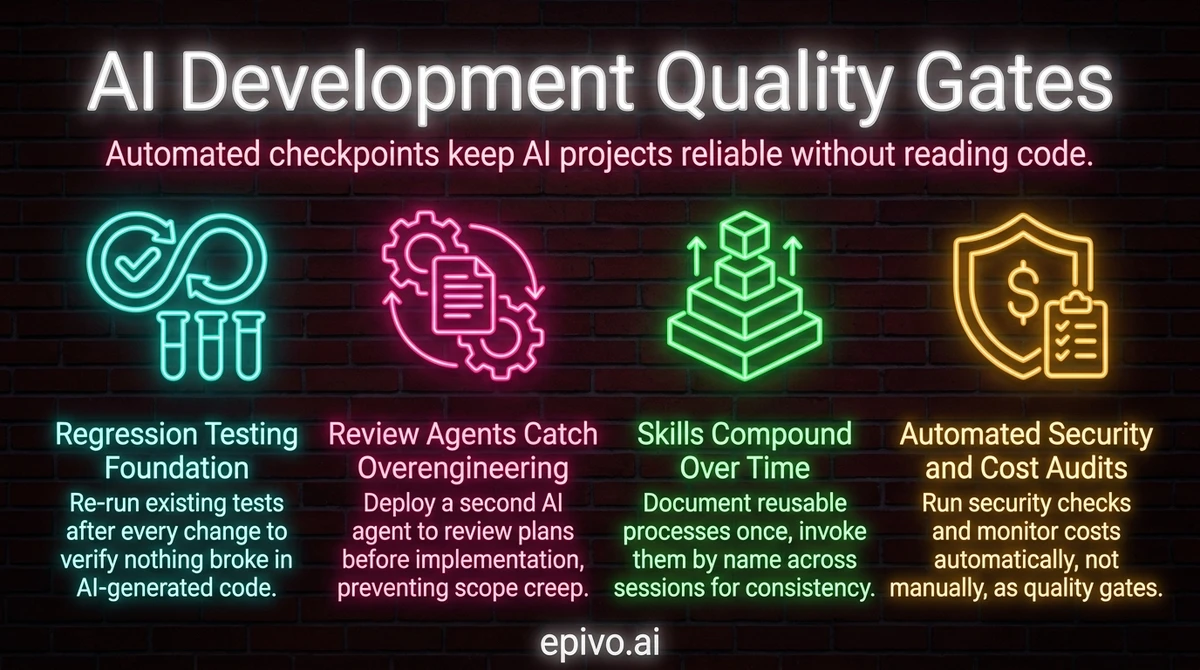

Why quality gates matter more in AI-driven development

In traditional development, a senior engineer reads every pull request and catches problems through expertise. That model does not scale when AI generates hundreds of lines per session, and it does not apply at all if you are a non-coder building with AI.

The alternative is process control — automated systems that verify correctness, security, and maintainability at every stage. I do not review code manually. I have never read a pull request on any of my four production projects. Instead, I rely on a layered set of AI development quality gates that catch problems before they reach users.

The core principle is simple: if a quality check can be automated, it must be automated. Manual review is slow, inconsistent, and creates a bottleneck. Automated gates run every time, miss nothing they are designed to catch, and let you move at the speed AI makes possible.

This is not about lowering the quality bar. It is about enforcing it consistently through systems rather than human attention.

Regression testing — the foundation of every quality gate system

Regression testing means re-running existing tests after every change to verify that nothing broke. It is the single most important quality gate in any AI development project.

On the sonetel.com project, I have over 80 regression tests that run every night. These tests visit pages, measure loading speed, check that content renders correctly, and verify that API integrations still work. They catch issues before any user does.

The principle applies universally: for every system you build, find ways to automate quality checks. Build the measurement process alongside the product, not as an afterthought. When AI generates code, it can also generate tests — and you should ask it to do so for every meaningful feature.
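A minimal sketch of what one such nightly check could look like, assuming a page test means "fetch the page, confirm the expected text rendered, and confirm it loaded within a time budget". The `PageCheck` fields, the thresholds, and the injected `fetch` function are hypothetical names for illustration, not the actual sonetel.com test suite.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class PageCheck:
    url: str            # page to visit (hypothetical)
    must_contain: str   # text that shows the content rendered
    max_seconds: float  # loading-speed budget (assumed threshold)


def run_check(check: PageCheck, fetch: Callable[[str], str]) -> list[str]:
    """Return failure messages for one page; an empty list means it passed."""
    started = time.perf_counter()
    try:
        body = fetch(check.url)
    except Exception as exc:  # a failed fetch is a test failure, not a crash
        return [f"{check.url}: fetch failed ({exc})"]
    elapsed = time.perf_counter() - started

    failures = []
    if check.must_contain not in body:
        failures.append(f"{check.url}: expected text {check.must_contain!r} not found")
    if elapsed > check.max_seconds:
        failures.append(f"{check.url}: took {elapsed:.2f}s, budget {check.max_seconds:.2f}s")
    return failures


def run_suite(checks: list[PageCheck], fetch: Callable[[str], str]) -> list[str]:
    """Run every check and collect all failures for the nightly report."""
    failures = []
    for check in checks:
        failures.extend(run_check(check, fetch))
    return failures
```

Passing `fetch` in as a parameter keeps the sketch testable without a network: a real run would supply an HTTP client call, while a dry run can supply a stub that returns canned HTML.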

Virtual users take testing further

Beyond traditional regression tests, I use virtual users — synthetic test personas that exercise the system automatically. In the Epivo AI tutor project, virtual students run in batches of twenty across multiple sessions, connecting as if from the mobile app. A dedicated quality monitoring skill evaluates both technical quality and domain-specific quality metrics. This approach catches roughly 85% of issues without any manual testing.

The key insight: virtual users test behavior, not just endpoints. They simulate real usage patterns, which surfaces problems that unit tests miss entirely.
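The batching idea can be sketched roughly as follows, assuming each virtual user is identified by a persona name and that a `run_session` function (hypothetical) plays one full session and reports whether it passed. This illustrates the pattern only; it is not the Epivo implementation.

```python
from typing import Callable, Iterator


def batches(personas: list[str], size: int = 20) -> Iterator[list[str]]:
    """Split the persona list into consecutive batches of at most `size`."""
    for start in range(0, len(personas), size):
        yield personas[start:start + size]


def run_virtual_users(
    personas: list[str],
    run_session: Callable[[str], bool],
    size: int = 20,
) -> dict[str, object]:
    """Run every persona through one session and summarise the outcome."""
    failed = []
    batch_count = 0
    for batch in batches(personas, size):
        batch_count += 1
        for persona in batch:
            # run_session is expected to exercise real behaviour end to end
            if not run_session(persona):
                failed.append(persona)
    return {"batches": batch_count, "sessions": len(personas), "failed": failed}
```

The summary dictionary is the kind of input a separate quality monitoring step could evaluate, both for technical failures and for domain-specific quality.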

Review agents that catch overengineering and scope creep

Every plan AI produces should be reviewed by a second AI agent before you approve it. This is one of the most valuable AI development quality gates I have adopted.

Here is why: the first draft of a change request usually has serious problems. Not syntax errors or typos — structural issues. Overcomplicated solutions. Unnecessary abstractions. Scope that quietly expanded beyond what you asked for. A spawned review agent reads the plan fresh, with no accumulated assumptions from the conversation that produced it, and catches exactly these problems.

The confidence score system

My review agents rate every plan on a 1-to-10 confidence scale. A score below 7 means the plan needs revision before implementation begins. The agent explains what drove the score down — typically overengineering, missing edge cases, or solutions that are more complex than the problem warrants.

This creates a natural checkpoint. I do not need to understand the technical details of the plan. I need to see that a fresh reviewer gave it a high confidence score and that its concerns were addressed. The review process does the technical evaluation so I can focus on whether the plan solves the right problem.
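Expressed as code, the checkpoint might look like the sketch below. The threshold of 7 comes from the text; the `Review` structure, the rule of using the lowest score when several reviewers weigh in, and the treatment of unaddressed concerns are assumptions made for illustration.

```python
from dataclasses import dataclass, field

REVISION_THRESHOLD = 7  # from the text: a score below 7 means revise


@dataclass
class Review:
    score: float  # 1-to-10 confidence score from one review agent
    open_concerns: list[str] = field(default_factory=list)  # not yet addressed


def gate(reviews: list[Review]) -> str:
    """Return 'revise' or 'proceed' for a plan, given its reviews."""
    if not reviews:
        raise ValueError("at least one review is required")
    lowest = min(review.score for review in reviews)  # assumed: strictest reviewer wins
    has_open_concerns = any(review.open_concerns for review in reviews)
    if lowest < REVISION_THRESHOLD or has_open_concerns:
        return "revise"
    return "proceed"
```

Nothing in this gate requires reading the plan itself, which is the point: the decision rests on the score and on whether the reviewer's concerns were dealt with.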

Two failure modes review agents prevent

AI has two opposing tendencies. Sometimes it defaults to quick fixes that create technical debt. Other times it overengineers, building elaborate architectures when a simple solution would work. Review agents apply downward pressure on overengineering by explicitly looking for and removing bloat. Combined with your upward pressure on quality (asking "What is the best long-term solution for the user?"), you get solutions that are right-sized for the problem.

Trigger a review agent

"Have it reviewed." For higher-stakes changes: "Have it reviewed by 2 agents."

Because the review process is defined in the project's memory (spawn a review agent, get a confidence score, incorporate feedback you agree with, target 8–9/10 before implementation), three words trigger the full procedure. Brevity is the point.

Post-implementation review — catching what the first pass missed

Review agents before implementation catch design problems. But a different category of issues only appears after the code is written: wrong field values, duplicate logic, missing validation, overcomplicated patterns that seemed reasonable during implementation. These are execution errors, and they need a separate checkpoint.

The technique is simple. After completing a significant change (anything touching more than a handful of files), type one instruction: "Review what was implemented with two agents." Two separate agents read the finished code independently. Each starts fresh, with no attachment to the decisions made during implementation. They flag issues the implementing agent missed because it was too close to its own reasoning.

Once both agents report their findings, type one short instruction: "Fix this. Follow the process." Every issue gets resolved in one pass.

For really big changes — foundational architecture, large content batches, schema migrations touching many files — do it twice. The first review-fix cycle catches obvious problems. The second catches subtler issues revealed by the first round of fixes. Each cycle takes minutes. Two cycles cost ten minutes total. Finding the same issues through manual testing or user complaints costs days or weeks.

Not every change warrants this. A one-line fix needs no post-implementation review. A five-file feature change benefits from one pass. A twenty-file architecture change benefits from two. Match review depth to blast radius — how many files changed and how many downstream components are affected. Your judgment about magnitude determines the investment.
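As a rough rule of thumb, the mapping from blast radius to review depth could be sketched like this. The three anchor points (one line, five files, twenty files) come from the text; the cut-offs in between and the `foundational` flag are assumptions, and real judgment about downstream impact should override the numbers.

```python
def review_cycles(files_changed: int, foundational: bool = False) -> int:
    """Suggest how many post-implementation review-fix cycles to run."""
    if foundational or files_changed >= 20:
        return 2  # architecture-scale change: review, fix, then review again
    if files_changed >= 5:
        return 1  # feature-scale change: one pass
    return 0      # small fix: no post-implementation review


# Example: a schema migration touching 8 files but flagged as foundational
cycles = review_cycles(8, foundational=True)
```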

Post-implementation review

Review what was implemented with 2 agents.

After both agents report findings: "Fix this. Follow the process." For foundational changes, repeat the cycle a second time.

Review steps beyond code — quality gates for any AI output

Code is not the only AI output worth reviewing. The review patterns described above — pre-implementation and post-implementation review agents — apply to code and change requests. But the same principle works for any AI-generated output: translations, data transformations, generated images, content summaries, extracted information.

The quality arithmetic is consistent across all of these. In my experience, a single AI generation pass produces roughly 70% quality. That is not a failure — it is what the first pass is designed for. Adding one review step — where a second AI pass evaluates the output against the original input and your requirements — lifts that to 80–90%. Adding review steps at multiple stages can push quality even higher.

Three conditions make review steps effective:

The reviewer must have the same context as the generator. A review agent that only sees the output cannot judge whether it meets your goals. It needs the input, the output, and your quality requirements. For example, an image reviewer needs to see the generated image alongside the article text it should illustrate and your standards for visual quality. Without that full picture, the review is guessing.

The reviewer must have different instructions. A review step is not the same prompt run twice. The generator's prompt says create this. The reviewer's prompt says audit this against these criteria. For a translation, the reviewer checks fidelity to the original and brand voice. For an image, it checks alignment with the article text and visual standards. The audit criteria will differ by domain, but the principle holds: separation of concerns catches errors the creator cannot see in its own output.

Rejection signals should flow back upstream. When a review step rejects output at a high rate, that is not a quality gate problem; it is a generation problem. The fix is to show Claude the pattern (the inputs that failed and why) and ask: "The rejection rate is high. How can it be improved? Follow the process." Claude identifies what the upstream step is getting wrong and recommends changes. You update the process accordingly. Each feedback cycle makes the pipeline better.

This feedback loop is the difference between a static pipeline and an evolving one that improves with each cycle. It applies equally to automated translation pipelines, image generation workflows, content audits, and any other process where AI generates output that matters.
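One way to make the rejection signal visible is to log each review outcome and summarise the pattern, as in the sketch below. The `ReviewOutcome` record and the 20% alert threshold are assumptions for illustration; what counts as "high" depends on the pipeline.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ReviewOutcome:
    item_id: str      # which generated output was reviewed
    accepted: bool
    reason: str = ""  # reviewer's stated reason when rejected


def rejection_report(outcomes: list[ReviewOutcome], alert_rate: float = 0.2) -> dict:
    """Summarise how often the reviewer rejects output and why."""
    total = len(outcomes)
    rejected = [o for o in outcomes if not o.accepted]
    rate = len(rejected) / total if total else 0.0
    return {
        "rate": rate,
        "needs_upstream_fix": rate > alert_rate,  # assumed threshold
        "top_reasons": Counter(o.reason for o in rejected).most_common(3),
    }
```

The `top_reasons` list is the "pattern" to hand back to the AI along with the failing inputs when asking how the upstream generation step can be improved.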

The compounding math — how 'follow the process' reaches 99%

Let me put concrete numbers on the pipeline. These are approximate figures from operational experience, not a controlled study — but they illustrate why the process works as well as it does.

A first draft has roughly 30% errors. A single review agent, reading with fresh eyes, catches about 70% of those errors. That leaves 30% of the original 30%, or 9%, so quality jumps from 70% to 91%. A second review agent does not double the catch rate because both agents share similar training and overlap on obvious issues. But the second agent still finds errors the first missed. In practice, two review agents together push quality to roughly 95%.

Now implementation begins. Even with a 95%-quality plan, the act of writing code introduces its own mistakes — wrong field values, missed edge cases, logic that drifted from the plan. The resulting code typically has around 10% errors. A post-implementation audit by two agents catches about 90% of those execution errors, leaving roughly 1%. That puts overall quality at approximately 99%.

The residual 1% is where automated regression tests and your manual verification come in.
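The arithmetic above can be checked with a few lines of code. This sketch uses only the approximate figures quoted in the text; the helper name `remaining_errors` is made up for illustration, and the "implied" catch rate for two reviewers is derived backwards from the 95% figure rather than measured.

```python
def remaining_errors(error_rate: float, catch_rate: float) -> float:
    """Share of the work still wrong after a review catches `catch_rate` of the errors."""
    return error_rate * (1.0 - catch_rate)


# Planning stage, using the article's approximate figures.
draft_errors = 0.30
after_one_reviewer = remaining_errors(draft_errors, 0.70)  # about 0.09

# The text gives roughly 95% quality after two reviewers. Working backwards,
# that implies the pair catches about 83% of draft errors, not 70% twice over.
after_two_reviewers = 0.05
implied_pair_catch_rate = 1.0 - after_two_reviewers / draft_errors

# Implementation stage: new execution errors, then a two-agent audit.
code_errors = 0.10
after_audit = remaining_errors(code_errors, 0.90)  # about 0.01

for label, errors in [
    ("one reviewer", after_one_reviewer),
    ("two reviewers", after_two_reviewers),
    ("post-implementation audit", after_audit),
]:
    print(f"{label}: about {1.0 - errors:.0%} quality")
```

Note that the model treats each stage's errors separately: the 10% execution errors are introduced fresh during implementation, which is why a second, independent audit is needed even when the plan itself was strong.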

The beauty of this model is that typing three words — follow the process — triggers the entire chain: draft, review, iterate to confidence, implement, audit, fix, verify. You do not manage each step. The process manages itself. Three words, ~99% quality.

But there is a critical caveat. The old saying goes: the surgery went fine, but the patient died. The 99% measures how well the pipeline executes your intent. If your initial instructions were vague, contradictory, or aimed at the wrong goal, the pipeline will deliver a polished version of the wrong thing. Process quality does not equal outcome quality. Your irreplaceable contribution is asking the right questions, defining clear goals, and verifying that the result actually solves the right problem.

One more constraint: the math applies per reasonable chunk of work — a feature, a bug fix, a refactor. You cannot write a single change request that says "build the entire system" and expect 99% quality. Review agents cannot meaningfully evaluate something that large. Your job is to decompose ambitious goals into pieces the pipeline can handle, then compound progress across many iterations. Each chunk reaches ~99%. Hundreds of chunks build a production system.

When automated quality is low

The rejection rate is high. How can it be improved? Follow the process.

Claude analyzes why the review step is rejecting output and identifies what the upstream generation process is getting wrong. You update the process accordingly. Works on any automated pipeline — translations, images, content, data extraction.

Skills for everything — reusable processes that compound over time

A skill is a documented process that an AI agent can follow repeatedly. Instead of explaining the same workflow every session, you save it once and invoke it by name.

This matters for quality because consistency compounds. Every time a skill runs and you discover an issue, you update the skill. The next run is better. After ten iterations, the process handles edge cases you never anticipated at the start. Skills are not write-once documents — they are living process definitions that improve with every use.

I create skills for every repeated process: reviewing change requests, running audits, summarizing sessions, building curriculum content, running automated tests. Each skill captures hard-won operational knowledge that would otherwise be lost when a session ends and the context window resets.

Why skills beat tribal knowledge

In a traditional team, quality processes live in people's heads. When someone leaves, the knowledge goes with them. Skills externalize that knowledge into a format AI can execute consistently. A new session picks up the skill file and runs the process exactly as designed — no onboarding, no knowledge transfer, no drift.

The meta-pattern: after a round of problems, ask AI to review what went wrong and update the relevant skill. This creates a feedback loop where failures actively improve future quality rather than simply being fixed and forgotten.

Updating a skill

Does the skill need to be updated perhaps?

Ask this after something goes wrong, not proactively. A working skill needs no changes. When a failure reveals a gap, one question is enough for Claude to identify and patch it.

Security audits and cost monitoring — automated, not manual

Two quality gates that many AI builders neglect are security audits and cost monitoring. Both should be automated.

Automated security and scalability audits

AI can run comprehensive security audits — checking for OWASP vulnerabilities, reviewing authentication flows, scanning for exposed secrets, evaluating scalability bottlenecks. These audits do not require you to understand the security implications yourself. You set the standard ("run an OWASP Top 10 review"), AI executes it, and you read the summary.

The important thing is to make audits part of the process, not a one-time event. Security reviews should run after significant changes, not just before launch.
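To give a feel for one small piece of such an audit, here is a deliberately simple sketch of an exposed-secrets scan. The three patterns are illustrative assumptions (an AWS-style access key ID, a private key header, and a quoted value assigned to a suspicious name); a real audit would rely on a dedicated scanner with a much larger rule set, and this sketch covers nothing else in the OWASP Top 10.

```python
import re

# Illustrative patterns only; real scanners ship far more rules.
PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded secret assignment": re.compile(
        r"(?i)\b(api[_-]?key|secret|password|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, finding label) pairs for suspicious lines."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, label))
    return findings
```

Running a check like this after every significant change, rather than once before launch, is what turns it from a one-time event into a gate.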

Cost monitoring via dashboards

When your project uses AI in production — for chat, content generation, translation, or any LLM-powered feature — you need visibility into costs. I route all LLM calls through OpenRouter, which provides a centralized cost-tracking hub. From there, I ask AI to build an admin dashboard showing costs per service, per model, and daily totals.

The dashboard is not for micromanaging spend. It is for asking the right questions. When costs spike, I ask: "Why did we have so much cost yesterday?" That question usually leads to improvements in how LLM calls are structured: reducing unnecessary calls, choosing the right model for each task, or fixing a process that ran more times than intended.

This approach (set up visibility, then ask questions about anomalies) is far more effective than trying to manually track costs line by line.
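The aggregation behind such a dashboard can be sketched in a few lines. The `LlmCall` record below is a hypothetical shape for logged calls, not OpenRouter's actual export format, and the "more than twice the median day" spike rule is an assumption chosen for simplicity.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class LlmCall:
    day: str        # ISO date such as "2025-03-01"
    service: str    # which feature made the call, e.g. "chat"
    model: str      # model identifier as logged
    cost_usd: float


def totals_by(calls: list[LlmCall], key: str) -> dict[str, float]:
    """Sum cost per value of `key`, where key is 'day', 'service' or 'model'."""
    totals: dict[str, float] = defaultdict(float)
    for call in calls:
        totals[getattr(call, key)] += call.cost_usd
    return dict(totals)


def spike_days(daily: dict[str, float], factor: float = 2.0) -> list[str]:
    """Days costing more than `factor` times the median day (assumed rule)."""
    if not daily:
        return []
    threshold = factor * median(daily.values())
    return sorted(day for day, cost in daily.items() if cost > threshold)
```

Calling `totals_by(calls, "service")` and `totals_by(calls, "model")` gives the per-service and per-model views, while `spike_days(totals_by(calls, "day"))` lists the days worth asking about.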

Drift — the hidden failure mode of iterative reviews

There is one failure mode that catches experienced AI builders off guard: focus drift during iterative reviews.

The pattern works like this. You write a change request. You have two review agents evaluate it independently. You incorporate their feedback and iterate. Each cycle improves the plan. Confidence scores climb. Everything looks like it is working.

But somewhere around the fifth or sixth iteration, something subtle happens. The AI gets hung up on implementation details it discovered during reviews. Each iteration slightly shifts attention away from your original goals toward whatever technical tangent the AI has fixated on. After enough cycles, the plan may score highly on technical completeness while no longer serving the purpose you started with.

A CR for a simple notification system gradually becomes an enterprise messaging platform — complete with message queues, retry logic, delivery receipts, and a preference center. The confidence score reads 9.2 out of 10. But the original goal was just to notify users.

The remedy is one question. Periodically ask: Will this align with the goals I expressed initially? This single question snaps focus back to what matters for your solution. High confidence scores measure internal consistency, not alignment with your intent — only you can verify that.

Make this a habit: every three to four review iterations, pause and ask the alignment question before continuing.

Frequently asked questions

- What are AI development quality gates?

  AI development quality gates are automated checkpoints that verify your project's correctness, security, and maintainability at each stage of development. They include regression tests, review agents, security audits, and cost monitoring. The purpose is to maintain code health without requiring manual code review, which is essential when AI generates the code.

- How many regression tests do I need?

  Start with tests for your most critical user flows: the paths that would cause the most damage if broken. You do not need a specific number on day one. What matters is building the habit of adding tests alongside features. Over time, the suite grows naturally. The sonetel.com project reached 80+ nightly tests through this incremental approach.

- What is a review agent confidence score?

  A confidence score is a 1-to-10 rating that a review agent assigns to a plan or change request. It reflects how likely the plan is to succeed without issues. Scores below 7 indicate the plan needs revision, typically because of overengineering, missing edge cases, or scope creep. The score gives non-technical builders a quick way to evaluate plan quality.

- Do I need to understand code to set up quality gates?

  No. You need to understand what quality means for your project (fast page loads, correct data, secure access) and then ask AI to build the automated checks. You define the criteria and read the summaries. AI handles the implementation of the tests, audits, and monitoring dashboards.

- How do skills improve quality over time?

  Skills are reusable process definitions that AI agents follow. Each time a skill runs and you discover an issue, you update the skill to handle that case. After many iterations, the skill captures edge cases and best practices that would be impossible to define upfront. This creates compounding quality: each failure makes the process better rather than just being fixed in isolation.