What is a Change Request in AI development?

A Change Request, or CR, is a written plan that describes what you want to change, why it matters, and how the change will be verified. In traditional software teams, this kind of planning document might be called a ticket, a spec, or a design doc. In AI-assisted development, the CR plays a more critical role: it is the primary mechanism through which a non-coder controls the output of an AI coding agent.

Every CR follows a consistent format. It includes a summary of the problem or feature, a proposed solution with specific files and changes listed, scope boundaries that define what is not included, and a verification plan. The AI writes the CR. You review it at the level of intent and logic — does this plan make sense? Does it match what I asked for? — without needing to evaluate the technical details.

This is the core shift in AI-assisted building: you replace craft control with process control. You do not verify quality by reading code. You verify quality by ensuring that every change goes through a structured planning process before implementation begins.

In practice, I never look at the format of the CR itself. I have no idea what the headings are or how the sections are structured. What matters is that the structure exists and is enforced consistently. The CR is written for the AI to read in future sessions, not for me.
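Because what matters is that the structure exists and is enforced, it can help to see what "enforced" might mean mechanically. The sketch below is a minimal illustration, assuming a CR is modeled as a Python data structure with the parts listed earlier (summary, proposed solution with files, scope boundaries, verification plan). The field names, the `CR-042` identifier and the `missing_sections` helper are hypothetical, not the actual CR template.

```python
from dataclasses import dataclass, field

# Hypothetical section names; a real CR template may use different headings.
REQUIRED_SECTIONS = (
    "summary", "proposed_solution", "files_to_modify",
    "out_of_scope", "verification_plan",
)


@dataclass
class ChangeRequest:
    cr_id: str                # illustrative ID format, e.g. "CR-042"
    summary: str              # the problem or feature
    proposed_solution: str    # how the change will be made
    files_to_modify: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)  # scope boundaries
    verification_plan: list[str] = field(default_factory=list)
    status: str = "Draft"

    def missing_sections(self) -> list[str]:
        """Names of required sections that are still empty."""
        return [name for name in REQUIRED_SECTIONS if not getattr(self, name)]


cr = ChangeRequest(
    cr_id="CR-042",
    summary="Password reset emails are never sent.",
    proposed_solution="Call the existing mailer from the reset endpoint.",
    files_to_modify=["api/auth/reset.py"],
    verification_plan=["Request a reset and confirm the email arrives."],
)
print(cr.missing_sections())  # ['out_of_scope']
```

Here the draft would be sent back before review because it never states what is out of scope.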

The CR workflow: plan, review, implement

The workflow for AI development change requests has three stages, and each one matters.

Stage 1: Write the plan. You describe what you want — a new feature, a bug fix, a refactor — in plain language. The AI produces a formal CR document with the problem statement, proposed solution, list of files to modify, and verification steps. This is where most of the thinking happens. I typically spend several iterations refining the plan before moving forward. Asking questions is more effective than giving instructions at this stage. Instead of dictating a solution, I ask the AI what the best long-term approach is, then question its reasoning.

Stage 2: Spawn a review agent. Before any CR is presented for approval, a separate AI agent reviews it. This is not the same session that wrote the plan — it is a fresh instance with no accumulated assumptions. The review agent evaluates the proposal for overengineering, missing edge cases, scope creep, and technical errors. In my experience, the first draft of a CR usually has significant issues. The review agent catches problems that the original session cannot see because it is too close to its own reasoning.

Stage 3: Implement. Only after the plan has been written, reviewed, and approved does the AI write any code. At this point, the implementation is guided by a document that has already been stress-tested. The result is cleaner, more focused, and far less likely to introduce unintended side effects.

This three-stage workflow is enforced through a governance file that lives in every project: a CLAUDE.md file that captures project-level instructions, workflow procedures, and architectural decisions. The file is not written for human readers; it instructs the AI on how to behave within the project. It is the process architecture that makes AI development reliable.

The shortcut in action

Add email notifications for password resets. Follow the process.

Three words ("follow the process") replace ten manual steps, for changes where the direction is clear. But not every change should be fully automated. The next section explains when this shortcut is right and when you need a checkpoint first.

Two tracks: fully automated and semi-automated

Not every change deserves the same level of oversight. A bug fix where the direction is obvious is different from an architectural decision that will shape the system for months. The CR workflow has two tracks, and choosing the right one is one of the most important judgments you make as a builder.

Fully automated: "follow the process." Use this for minor functions, bug fixes, and small scoped changes where the direction is clear and the risk is low. You describe the change and say "follow the process." The AI runs the full ten-step pipeline autonomously. This is the right track when you already know what you want and the change is unlikely to create trade-offs you need to evaluate.

Semi-automated: "Write a CR and have it reviewed by 2 agents." Use this for larger decisions: architectural changes, UX flow redesigns, infrastructure choices, anything with trade-offs that affect long-term direction. You describe what you want, then say "Write a CR and have it reviewed by 2 agents." The AI writes the plan, runs the reviews, and presents a brief summary in the chat. This summary is your checkpoint.

Your job at this checkpoint is to read the summary carefully — not the CR document itself, but the summary the AI presents on screen. If anything creates dissonance or you do not fully understand it, ask about it. The right questions at this stage are high-level and goal-oriented: Will this ensure a robust high-availability service? Will this create the best user experience? Will this flow drive revenue effectively? Is this the approach with the fewest long-term trade-offs?

Only after you are satisfied do you say "follow the process" to authorize the remaining steps: implement, audit, update the changelog and docs, update the CR status, commit, and push. The semi-automated track gives you a strategic checkpoint without requiring you to manage every step manually. It is the middle path between full automation and step-by-step oversight.

When to use which track:

- Fully automated: Bug fixes, config changes, small features with obvious implementation, mechanical refactors, changelog updates

- Semi-automated: New architecture, API design, UX flow changes, infrastructure decisions, payment or security integrations, anything where choosing wrong now creates expensive rework later
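The two-track rule can be sketched as a small decision function. This is a hypothetical illustration: the category labels are made up from the list above, and in practice the choice is a judgment call rather than a table lookup. One assumption worth flagging is that unfamiliar categories fall back to the semi-automated track, on the theory that an unneeded checkpoint costs less than expensive rework.

```python
# Hypothetical category labels derived from the "when to use which track" list.
SEMI_AUTOMATED = {
    "architecture", "api_design", "ux_flow",
    "infrastructure", "payments", "security",
}
FULLY_AUTOMATED = {
    "bug_fix", "config", "small_feature",
    "mechanical_refactor", "changelog",
}

FULL = "Follow the process."
CHECKPOINT = "Write a CR and have it reviewed by 2 agents."


def choose_track(category: str, long_term_tradeoffs: bool = False) -> str:
    """Return the prompt suffix for a change under the two-track rule."""
    if category in SEMI_AUTOMATED or long_term_tradeoffs:
        return CHECKPOINT   # pause at the summary before implementation
    if category in FULLY_AUTOMATED:
        return FULL         # pre-authorize the whole pipeline
    return CHECKPOINT       # assumption: unknown categories get the checkpoint


print(choose_track("bug_fix"))         # Follow the process.
print(choose_track("infrastructure"))  # Write a CR and have it reviewed by 2 agents.
```

Escalating anything with long-term trade-offs, even a nominal bug fix, mirrors the rule that choosing wrong now creates expensive rework later.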

The semi-automated checkpoint

Redesign the session persistence layer to support horizontal scaling. Write a CR and have it reviewed by 2 agents.

The AI writes the plan, reviews it, and presents a summary. You read the summary, ask goal-oriented questions, and only then say 'follow the process' to authorize implementation.

The full instruction — copy and adapt

Below is the actual instruction block that lives in my project's CLAUDE.md file. This is the text the AI reads at the start of every session. Copy it, paste it into your own governance file, and adapt the details to match your workflow.

This instruction is a living document. It started as six steps and grew to ten as I discovered failure modes in practice. Your version will evolve differently. The important thing is that every step exists for a reason — and those reasons are explained in the next section.

CLAUDE.md instruction — copy and adapt

## No Code Without a Reviewed Plan

No code changes may be implemented without a reviewed plan.

### Two tracks: Fully automated vs Semi-automated

Fully automated ("follow the process"): For minor functions, bug fixes, and small scoped changes where the direction is clear. The user says "follow the process" and the AI runs the full cycle autonomously.

Semi-automated ("Write a CR and have it reviewed by 2 agents"): For larger decisions, architectural changes, features with trade-offs, or anything that affects long-term system direction. The AI writes the CR, runs reviews, and presents a brief summary. The user reads the summary carefully; this is the checkpoint to ask goal-oriented questions ("Will this ensure high availability?" "Will this create the best UX?"). Only after satisfaction does the user say "follow the process" to authorize implementation.

When to use which track:

- Fully automated: Bug fixes, config changes, small features, mechanical refactors
- Semi-automated: New architecture, API design, UX flow changes, infrastructure decisions, anything with trade-offs

Shortcut ("follow the process"): When the user says "follow the process", this pre-authorizes the full cycle autonomously without pausing for approval at each gate:

1. Write a CR: Create a Change Request document per the CR Authoring Guide using the template
2. Review: Spawn review agent(s) to evaluate the CR (checks for overengineering, correctness, scope, side effects). For non-trivial changes, spawn 2 review agents in parallel for independent assessments.
3. Iterate to confidence ≥ 8: If any reviewer scores confidence < 8, incorporate feedback, update the CR, and re-review. Repeat up to 2 rounds. If confidence still < 8 after 2 re-review rounds, stop and ask the user what to do. When incorporating reviewer suggestions to simplify, reject simplifications that would compromise the user's core objectives, user experience, robustness, or solution elegance.
4. Implement: Write the code changes described in the CR
5. Audit implementation: Spawn audit agent(s) to verify the implementation matches the CR and check for bugs. For non-trivial changes, spawn 2 audit agents in parallel. Fix any bugs found, then re-audit if fixes were non-trivial.
6. Update changelog: Add entries under [UNRELEASED] in changelog
7. Update architectural docs: If the change affects architecture, APIs, or system design, update the relevant docs
8. Update CR status: Change "Status: Draft" → "Status: Implemented"
9. Commit: Use conventional commits (feat:, fix:, docs:), reference CR-ID. The commit MUST include the CR status update.
10. Push: Push to trigger deployment

Trivial vs non-trivial: A change is trivial if it touches ≤ 2 files with straightforward, mechanical edits (e.g., config change, typo fix, single-line bug fix). Everything else is non-trivial and gets 2 agents per review/audit round.

### Review Process

After writing any plan, spawn review agent(s). For non-trivial changes, spawn 2 agents in parallel. Each evaluates:

- Confidence score (1-10)
- Check for overengineering
- For bugs: validate root cause analysis

Iterate to confidence ≥ 8. If any reviewer scores < 8: incorporate feedback, update the plan, re-review. Up to 2 re-review rounds. If still < 8, ask the user. When incorporating reviewer suggestions to simplify, reject simplifications that would compromise the user's core objectives, user experience, robustness, or solution elegance.

### Audit Process

After implementing code changes, spawn audit agent(s). For non-trivial changes, spawn 2 agents in parallel. Each checks:

- Implementation matches the CR specification
- No introduced bugs, regressions, or missed edge cases
- Code quality and consistency with existing patterns

Fix issues found. Re-audit if fixes were non-trivial.

Paste this into your project's CLAUDE.md governance file. Adapt the specifics (CR template paths, commit conventions, deployment triggers) to match your workflow.
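The trivial vs non-trivial rule in the instruction block decides how many agents run per review or audit round. A minimal Python sketch, assuming each planned edit can be tagged as mechanical or not (that tag, the `FileEdit` type and the helper names are hypothetical), and assuming a trivial change gets a single agent:

```python
from dataclasses import dataclass


@dataclass
class FileEdit:
    path: str
    mechanical: bool  # config value, typo, single-line fix (a judgment call)


def is_trivial(edits: list[FileEdit]) -> bool:
    """Trivial: at most 2 distinct files, and every edit is mechanical."""
    files = {edit.path for edit in edits}
    return len(files) <= 2 and all(edit.mechanical for edit in edits)


def agents_per_round(edits: list[FileEdit]) -> int:
    """Non-trivial changes get 2 parallel agents per review/audit round."""
    return 1 if is_trivial(edits) else 2  # 1 for trivial is an assumption
```

A one-line config fix gets one reviewer; a three-file change, or any non-mechanical edit, gets two independent ones.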

Why each step exists — the rationale

Every step in the ten-step pipeline was added because its absence caused a real problem. Understanding the rationale helps you decide which steps matter for your project and which you might adapt.
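Viewed as control flow, "follow the process" is an ordered sequence of gates in which any failing gate halts the run. The sketch below is a hypothetical Python model, not the actual tooling: each step is a callable that returns `True` on success, and the step names are shorthand for the ten steps in the instruction block.

```python
from typing import Callable

# Shorthand names for the ten steps, in the order the instruction lists them.
STEPS = (
    "write_cr", "review", "iterate_to_confidence", "implement", "audit",
    "update_changelog", "update_docs", "update_cr_status", "commit", "push",
)


def follow_the_process(handlers: dict[str, Callable[[], bool]]) -> list[str]:
    """Run the steps in order and stop at the first one that fails.

    Each handler returns True on success. A conditional step such as
    update_docs can return True after doing nothing. Returns the steps
    that completed, so a halted run shows exactly where it stopped.
    """
    completed = []
    for step in STEPS:
        if not handlers[step]():
            break  # e.g. confidence still < 8 after 2 re-reviews: ask the user
        completed.append(step)
    return completed
```

Returning the completed steps, rather than a bare success flag, keeps the stopping point visible when a run is escalated.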

Step 1: Write a CR. Without a written plan, the AI jumps straight to code. The code may be technically excellent but solve the wrong problem or expand scope beyond what you intended. The CR forces written thinking before implementation — the single highest-leverage quality intervention available.

Step 2: Review with an independent agent. The session that wrote the plan cannot see its own blind spots. A fresh agent with no accumulated assumptions catches overengineering, scope creep, and logical errors that the author is too close to notice. This is the same reason traditional code review exists — but automated and available in seconds.

Step 3: Iterate to confidence ≥ 8. Without a quality threshold, reviews become rubber stamps. The agent says "looks good" and moves on. A numeric confidence score forces the reviewer to quantify its assessment. Below eight means the plan needs work. This creates a genuine quality gate rather than a ceremonial checkpoint.
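The confidence gate amounts to a bounded retry loop: one initial review, at most two re-review rounds, then escalation to the user. A minimal sketch, assuming `review` stands in for spawning one or two review agents and returns one 1-10 score per reviewer, and `revise` stands in for updating the CR from their feedback (both are hypothetical callables):

```python
from typing import Callable

THRESHOLD = 8        # every reviewer must score at least this (scale 1-10)
MAX_RE_REVIEWS = 2   # re-review rounds allowed after the first review


def review_until_confident(
    plan: str,
    review: Callable[[str], list[int]],       # one score per review agent
    revise: Callable[[str, list[int]], str],  # fold feedback into the plan
) -> tuple[str, bool]:
    """Return (plan, approved); approved=False means stop and ask the user."""
    scores = review(plan)
    for _ in range(MAX_RE_REVIEWS):
        if min(scores) >= THRESHOLD:
            return plan, True
        plan = revise(plan, scores)
        scores = review(plan)
    return plan, min(scores) >= THRESHOLD
```

Requiring the minimum score, not the average, to reach 8 reflects the "if any reviewer scores < 8" wording.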

Step 4: Implement. By now, the implementation is guided by a plan that has survived independent scrutiny. The code is focused, scoped correctly, and aligned with what you actually asked for.

Step 5: Audit implementation. This step was added after discovering that implementations sometimes drift from the plan. The code compiles and passes tests but does not match the CR specification. Post-implementation audit catches execution errors that pre-implementation review cannot see — because the code did not exist yet when the plan was reviewed.

Step 6: Update changelog. Without mandatory changelog entries, the AI can make silent modifications: it fixes what you asked for and quietly changes three other things it thought were improvements. The changelog makes every change visible.
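A changelog entry is a small, mechanical edit. The sketch below assumes a Keep a Changelog style file with an existing `## [UNRELEASED]` heading and `### <Category>` subheadings, using the categories named in this article (Added, Changed, Fixed, Performance, Docs). The function name and file layout are assumptions; the sketch only illustrates where an entry lands.

```python
CATEGORIES = ("Added", "Changed", "Fixed", "Performance", "Docs")


def add_changelog_entry(changelog: str, category: str, entry: str) -> str:
    """Add '- entry' under '### category' inside '## [UNRELEASED]'.

    Assumes '## [UNRELEASED]' already exists. A missing category heading
    is appended at the end of the unreleased section.
    """
    if category not in CATEGORIES:
        raise ValueError(f"unknown changelog category: {category}")
    lines = changelog.splitlines()
    start = lines.index("## [UNRELEASED]")  # raises ValueError if absent
    # The unreleased section runs until the next '## ' heading or end of file.
    end = next(
        (i for i in range(start + 1, len(lines)) if lines[i].startswith("## ")),
        len(lines),
    )
    heading = f"### {category}"
    if heading in lines[start:end]:
        pos = lines.index(heading, start, end) + 1
        while pos < end and lines[pos].startswith("- "):
            pos += 1  # skip existing bullets; append after the last one
        lines.insert(pos, f"- {entry}")
    else:
        lines[end:end] = [heading, f"- {entry}", ""]
    return "\n".join(lines) + "\n"
```

Rejecting unknown categories keeps every entry inside the fixed vocabulary, which is what makes the changelog quick to scan.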

Step 7: Update architectural docs. AI sessions do not carry memory between conversations. If the architecture changes but the documentation does not reflect it, the next session works from outdated context and makes decisions based on wrong assumptions. Keeping docs current is not bureaucracy — it is how AI maintains institutional knowledge.

Step 8: Update CR status. Without this step, completed CRs remain in Draft status indefinitely. When you have dozens of CRs, you cannot tell which changes were actually implemented. Closing the loop from Draft to Implemented is a two-second edit that prevents confusion across sessions.
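Closing the loop can also be checked mechanically. A minimal Python sketch, assuming the CR template contains a single plain-text line reading `Status: Draft` (the exact line format is an assumption); raising an error when that line is missing makes a skipped or repeated update visible instead of silent.

```python
import re


def mark_implemented(cr_text: str) -> str:
    """Change the CR's 'Status: Draft' line to 'Status: Implemented'.

    Raises ValueError if no such line exists, so a skipped or already
    completed update is reported instead of passing silently.
    """
    updated, count = re.subn(
        r"^(Status:[ \t]*)Draft[ \t]*$",  # [ \t] so the match never spans lines
        r"\1Implemented",
        cr_text,
        count=1,
        flags=re.MULTILINE,
    )
    if count == 0:
        raise ValueError("no 'Status: Draft' line found in CR")
    return updated
```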

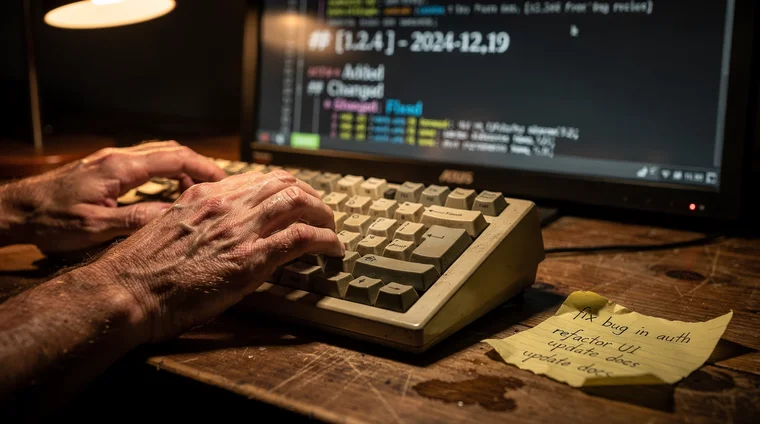

Step 9: Commit with conventional format. Conventional commits (feat:, fix:, docs:) with CR references create a searchable history. When something breaks, you can trace from the error to the commit to the CR to the plan to the rationale. This chain of accountability replaces the need to understand the code.
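One way to keep this chain intact is to reject commit messages that lack a type prefix or a CR reference. A hedged sketch in Python: the `CR-\d+` identifier pattern is an assumption (the CR-ID format is not specified here), and only the three types named in the instruction are accepted. A function like this could be called from a Git `commit-msg` hook, which receives the path of the file holding the proposed message as its first argument.

```python
import re

# Header: type, optional (scope), optional "!", then ": " and a subject.
HEADER = re.compile(r"^(feat|fix|docs)(\([\w\-./]+\))?!?: \S.*$")
# Assumed CR-ID format such as "CR-042"; adapt to your own template.
CR_REF = re.compile(r"\bCR-\d+\b")


def check_commit_message(message: str) -> list[str]:
    """Return the problems found; an empty list means the message passes."""
    lines = message.splitlines()
    header = lines[0] if lines else ""
    problems = []
    if not HEADER.match(header):
        problems.append("header must start with feat:, fix: or docs:")
    if not CR_REF.search(message):
        problems.append("missing CR reference such as CR-042")
    return problems
```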

Step 10: Push. Triggering the deployment pipeline is the final step. Separating it from the commit is deliberate — it creates a clear boundary between "code is ready" and "code is deployed." In practice, the push triggers CI/CD, and any deployment issues surface through monitoring rather than manual verification.

Changelogs and the accountability trail

Every commit in an AI-assisted project must update the changelog. This is a non-negotiable rule enforced through the project governance files. The changelog categorises every change — Added, Changed, Fixed, Performance, Docs — and creates a human-readable record of what happened and when.

Why does this matter so much? Because when you are not reading the code, the changelog is your window into what the AI actually did. If something breaks after a deployment, the changelog tells you exactly which changes were included. If a feature is not working as expected, you can trace back to the specific commit and its associated CR.

The changelog also prevents silent modifications. Without this rule, an AI agent might fix one thing and quietly change three other things it thought were improvements. The mandatory changelog requirement forces every change to be declared explicitly. This is especially important when running multiple parallel sessions, where several AI agents may be working on the same codebase simultaneously.
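Because each CR lists the files it will modify, an undeclared change can also be detected by comparing that list with what actually changed. A minimal sketch, assuming the planned file list is available as a set of paths and that `CHANGELOG.md` is always allowed to change (both assumptions; the CR file itself could be added the same way). `git diff --name-only <base>` lists tracked files that differ from the given commit.

```python
import subprocess


def changed_files(base: str = "HEAD") -> set[str]:
    """Tracked files that differ from `base` (staged or not), via git."""
    result = subprocess.run(
        ["git", "diff", "--name-only", base],
        capture_output=True, text=True, check=True,
    )
    return {line for line in result.stdout.splitlines() if line}


def undeclared_changes(
    planned: set[str],
    changed: set[str],
    always_allowed: frozenset[str] = frozenset({"CHANGELOG.md"}),
) -> set[str]:
    """Files that were modified but never listed in the CR."""
    return changed - planned - always_allowed


# Hypothetical usage inside a repository:
# print(undeclared_changes({"api/auth/reset.py"}, changed_files()))
```

An empty result is the expected case; anything else is exactly the kind of unexpected change worth a second look.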

I never read the changelog entries in detail. I scan them for anything that looks unexpected — a change I did not ask for, a scope that seems too broad, a fix in an area I did not know had a problem. The changelog is a signal system, not a document I study.

Automated reviews that catch overengineering

AI coding agents have two failure modes. The first is defaulting to quick fixes that create technical debt — patching symptoms instead of solving root causes. The second is overengineering — building elaborate abstractions, adding unnecessary layers, and expanding scope far beyond what was requested.

The spawned review agent is the primary defence against both. When a review agent evaluates a CR, it specifically looks for bloat: unnecessary complexity, features that were not requested, abstractions that add layers without adding value. A good review agent will strip a proposal down to its essential elements and flag anything that looks like scope creep.

This creates a productive tension. I push upward from quick fixes by asking the AI for the best long-term solution for the user. The review agent pushes downward from overengineering by identifying and removing excess. The result lands in the middle: solutions that are robust enough to last but simple enough to maintain.

This balance does not require understanding the code. It requires setting the right criteria — user value and system quality — and letting the process do the trimming. As Anthropic's documentation on Claude emphasises, clear instructions and well-defined evaluation criteria are more effective than trying to micromanage every output detail.

Parallel bug fixing at scale

One of the most powerful applications of AI development change requests is parallel bug fixing. This is where the fully automated track shines. When I have a list of ten bugs to resolve, I do not fix them sequentially. I open five or ten separate AI sessions, paste a bug description into each one, and type three words: "follow the process."

That single phrase is a shortcut defined in the project governance files. It pre-authorises the full ten-step cycle: write a CR, review it, iterate to confidence, implement, audit the implementation, update the changelog, update architectural docs, update the CR status, commit, and push. Each session runs the entire workflow autonomously. I monitor progress by checking which sessions have completed and scanning the changelogs for anything unexpected.

This approach works because the CR workflow is procedurally consistent. Every session follows the same steps in the same order. The review agent looks for the same categories of errors regardless of which session spawned it. The changelog requirement ensures nothing happens silently. The process architecture is what makes the parallelism safe; without it, five simultaneous AI agents modifying the same codebase would be chaos.

The key constraint is practical: parallel sessions should ideally not work on the same file at the same time, to avoid merge conflicts. Beyond that, the only limit is how many sessions your AI development setup can support. With multiple Claude Max subscriptions, each running several sessions, a single person can have the output capacity of dozens of developers — all governed by the same structured change request process.
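If each session's file list is known up front, the "not the same file at the same time" constraint can be turned into batches. The sketch below is a greedy first-fit grouping in Python, an illustrative assumption rather than the workflow described above: bugs in the same batch touch disjoint files and can run in parallel, while batches run one after another. Greedy grouping is simple but not guaranteed to produce the fewest batches.

```python
def conflict_free_batches(plans: dict[str, set[str]]) -> list[list[str]]:
    """Group bug IDs so no two in the same batch plan to edit the same file.

    `plans` maps a (hypothetical) bug or CR ID to the files its CR lists.
    """
    batches: list[tuple[list[str], set[str]]] = []
    for bug_id, files in plans.items():
        for ids, claimed in batches:
            if not files & claimed:   # no shared file with this batch
                ids.append(bug_id)
                claimed |= files      # in-place update of the batch's files
                break
        else:                         # conflicts with every batch: start one
            batches.append(([bug_id], set(files)))
    return [ids for ids, _ in batches]
```

Since file lists only exist once a CR is written, this assumes the plans are drafted before implementation starts.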

A 2024 study from the MIT Sloan School of Management found that highly skilled workers using AI tools saw significant productivity gains, with some tasks completed up to 56% faster. The CR workflow described here takes that further: it is not about making individual tasks faster, but about safely parallelising entire workflows.

Ten bugs, ten sessions

Checkout flow crashes on iOS when payment amount exceeds $999. Follow the process.

Paste one bug description per session. All ten run in parallel, each following the full CR cycle autonomously.

Frequently asked questions

- What is a Change Request in AI-assisted development?

  A Change Request (CR) is a written plan that describes a proposed code change, including the problem, solution, affected files, scope boundaries, and verification steps. The AI writes the CR, and it must be reviewed by a separate AI agent before any implementation begins.

- Why do AI development change requests matter if the AI writes the code?

  Because the builder typically cannot read the code, the CR is the primary control mechanism. It ensures every change is planned, reviewed, and documented before implementation, preventing silent modifications, scope creep, and unintended side effects.

- What does 'follow the process' mean in AI development?

  It is a shortcut phrase that pre-authorises a ten-step quality cycle: write a CR, spawn a review agent, iterate to confidence, implement, audit, update changelog, update docs, update CR status, commit, and push. Use it directly for minor changes like bug fixes. For larger decisions (architecture, UX flows, infrastructure), use the semi-automated track instead: describe the change and say 'Write a CR and have it reviewed by 2 agents.' Read the summary, ask goal-oriented questions, then say 'follow the process' only after you are satisfied with the direction.

- How do changelogs work in AI-assisted projects?

  Every commit must include a changelog entry categorised as Added, Changed, Fixed, Performance, or Docs. This rule is enforced through project governance files and creates an accountability trail that lets the builder track what the AI actually did without reading the code.

- Can multiple AI sessions work on the same project simultaneously?

  Yes. Parallel bug fixing is one of the most powerful applications of the CR workflow. Each session runs the full plan-review-implement cycle independently. The key constraint is avoiding simultaneous edits to the same file to prevent merge conflicts.